April 2, 2017

Topics: Cloud Volumes ONTAP, Storage Efficiencies, AWS, Advanced | 4 minute read

Organizations decide to migrate to the cloud because they like the scalability, flexibility, performance, and security of services like Amazon EBS. But ultimately, the deciding factor is often the cost savings that the move offers. Merely migrating to the cloud, however, doesn't guarantee AWS cost optimization.

If an organization makes the move to the cloud without understanding the pricing mechanism or how to adjust it for its use case, it may end up paying as much as, or even more than, it did with traditional infrastructure.

One reason for incurring extra cost is over-provisioned and underutilized Amazon Elastic Block Store (Amazon EBS) volumes. How can users control Amazon EBS costs?

A good solution for this problem is to find and delete the unused Amazon EBS volumes.

In this article we will show you how to automate a process that will find the unutilized volumes and delete them.

An Underutilization Use Case

One of the key cloud storage offerings on Amazon Web Services is the Amazon EBS volume.

Amazon EBS offers persistent storage. Each volume attached to an instance has a “DeleteOnTermination” flag; if the flag is set to false, the volume is not deleted when the instance is terminated.
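As a rough illustration, the flag can be set at launch time through the instance's block device mapping. The sketch below builds that mapping for boto3's `run_instances` call. The device name, instance type, and image ID are placeholders chosen for the example, not values from this article.

```python
def root_volume_mapping(size_gb, delete_on_termination=True, device_name="/dev/xvda"):
    """Build a BlockDeviceMappings entry for an EC2 launch request.

    device_name is an assumption; the actual root device name depends on the AMI.
    """
    return [{
        "DeviceName": device_name,
        "Ebs": {
            "VolumeSize": size_gb,
            "DeleteOnTermination": delete_on_termination,
        },
    }]


def launch_instance(ec2_client, image_id, instance_type="t3.micro"):
    """Launch one instance whose 200 GB volume is removed along with the instance."""
    return ec2_client.run_instances(
        ImageId=image_id,
        InstanceType=instance_type,  # placeholder instance type
        MinCount=1,
        MaxCount=1,
        BlockDeviceMappings=root_volume_mapping(200),
    )
```

With a real client this would be called as `launch_instance(boto3.client("ec2"), image_id)`, where `image_id` is an AMI of your own. Passing `delete_on_termination=False` to `root_volume_mapping` reproduces the situation described in this section, where volumes outlive their instances.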

Take, for example, a company that has set up Auto Scaling and is hit by a major database outage. The outage stopped the app servers from working, so Auto Scaling, which had been checking the health of the Amazon EC2 instances, began terminating those instances and launching new ones.

This process continued for a few hours, resulting in the launch of more than 50 new Amazon EC2 instances. Each instance had a 200 GB Amazon EBS volume, and none of those volumes was deleted on instance termination.

As a result, at the end of this outage, the organization had more than 10,000 GB of unutilized storage. These unutilized volumes were costing an additional $1000 per month without contributing to the organization’s operations. Left in place for a year, they would add roughly $12,000 in Amazon EBS costs.
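The figures in this example (50 volumes of 200 GB each, about $1000 per month) can be checked with a few lines of arithmetic. The price of $0.10 per GB-month used below is an assumption inferred from those figures, not a quoted AWS rate; actual pricing depends on volume type and region.

```python
def monthly_ebs_cost(volume_count, size_gb, price_per_gb_month):
    """Return (total provisioned GB, monthly cost) for a set of identical volumes."""
    total_gb = volume_count * size_gb
    return total_gb, round(total_gb * price_per_gb_month, 2)


# Assumed price: $0.10 per GB-month, inferred from the article's numbers.
total_gb, monthly = monthly_ebs_cost(volume_count=50, size_gb=200, price_per_gb_month=0.10)
print("Orphaned storage (GB):", total_gb)
print("Monthly cost (USD):", monthly)
print("Yearly cost (USD):", round(monthly * 12, 2))
```

Because EBS bills for provisioned capacity, the cost is the same whether or not any data is ever read from these volumes.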

The Unutilized Volumes Solution

To solve a non-utilization problem such as the one described above, the extra volumes have to be deleted. Below, you can read more on how to write a script that will help automate the task of finding unutilized volumes (any volume in an available state) and deleting them.

Before you begin to delete any volumes though, it is necessary to verify which volumes are important and which ones are not.

If there is an important volume, you should create a backup with a snapshot. You can also assign a special tag to the volume, such as a Name tag with the value “DND” (Do Not Delete).
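Both precautions can be scripted. The following is a minimal sketch using the boto3 EC2 client calls `create_snapshot` and `create_tags`; the function name `protect_volume` and the snapshot description are made up for this example. It writes the marker into the Name tag because that is the tag the cleanup code in this article inspects, which also means any existing Name value on the volume is overwritten.

```python
def protect_volume(ec2_client, volume_id, tag_value="DND"):
    """Snapshot a volume, then tag it so the cleanup script skips it.

    Hypothetical helper: returns the ID of the snapshot that was started.
    """
    snapshot = ec2_client.create_snapshot(
        VolumeId=volume_id,
        Description="Backup before unused-volume cleanup",  # illustrative text
    )
    # Note: this replaces any existing Name tag on the volume.
    ec2_client.create_tags(
        Resources=[volume_id],
        Tags=[{"Key": "Name", "Value": tag_value}],
    )
    return snapshot["SnapshotId"]
```

A call would look like `protect_volume(boto3.client("ec2"), volume_id)`. The snapshot completes asynchronously, so it is worth confirming that it has finished before relying on it as a backup.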

The script demonstrated below finds all volumes that are in an available, unattached state, filters out the volumes with the “DND” tag, and deletes the rest.

One way to perform such a task is to write a program using the AWS Command Line Interface (CLI), wrap it in a script (.sh for Linux), and schedule it with a cron job.

Another solution is to use an AWS SDK, with the code scheduled by CloudWatch triggers and executed by Lambda.

For this guide, we have written Python code that Lambda can execute automatically to remove any unutilized volumes.

How to Automatically Filter and Delete EBS Volumes with Lambda Functions and CloudWatch

Step 1: Get Started by Opening AWS Lambda

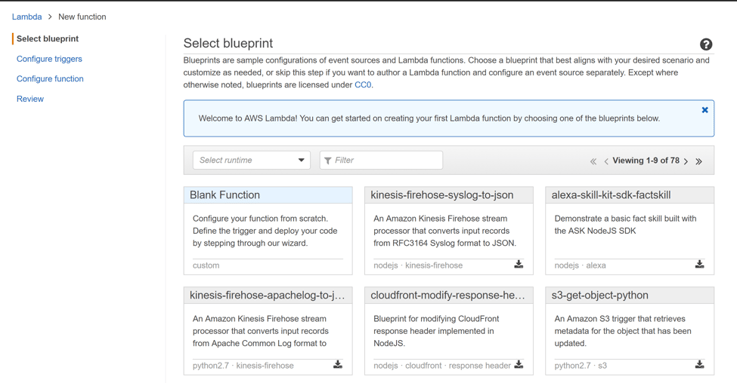

Step 2: Create a Lambda Function

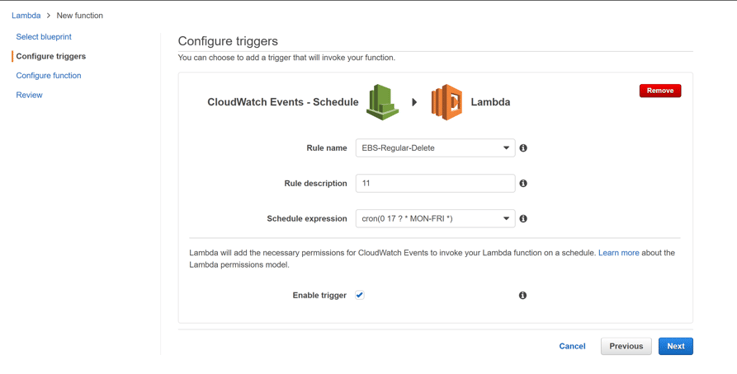

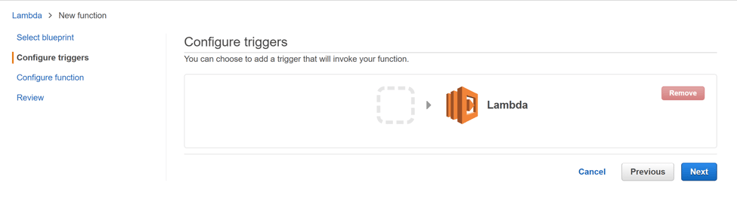

Step 3: Click on the Empty Box and Select CloudWatch Schedule

Step 4: Schedule the Function by Specifying Cron Expression

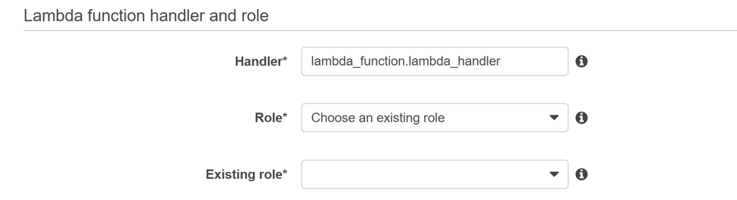
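For readers who prefer to script this step rather than use the console, the schedule can also be created through the CloudWatch Events API. This is only a sketch: the rule name and the 02:00 UTC daily schedule are assumptions made for the example. AWS schedule expressions use six fields (minutes, hours, day of month, month, day of week, year), and one of the two day fields must be `?`.

```python
def schedule_daily_cleanup(events_client, lambda_arn, rule_name="delete-unused-ebs-volumes"):
    """Create a daily schedule rule and point it at the cleanup Lambda function.

    rule_name and the cron expression are illustrative choices.
    """
    rule = events_client.put_rule(
        Name=rule_name,
        ScheduleExpression="cron(0 2 * * ? *)",  # every day at 02:00 UTC
        State="ENABLED",
    )
    events_client.put_targets(
        Rule=rule_name,
        Targets=[{"Id": "1", "Arn": lambda_arn}],
    )
    return rule["RuleArn"]
```

It would be called as `schedule_daily_cleanup(boto3.client("events"), lambda_arn)`. When the trigger is created this way instead of through the Lambda console, the function also needs a resource-based permission that allows `events.amazonaws.com` to invoke it.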

Step 5: Assign a Role with Necessary Permissions
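The article does not list the exact permissions, so the policy below is an assumption based on what the script does: it lists volumes, deletes them, and writes log output. It is expressed as a Python dictionary and printed as JSON so it can be pasted into the IAM policy editor.

```python
import json


def cleanup_policy():
    """Return a minimal IAM policy document for the volume cleanup function.

    Assumed permission set; tighten the Resource fields for production use.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Needed to list volumes (including their tags) and delete them.
                "Effect": "Allow",
                "Action": ["ec2:DescribeVolumes", "ec2:DeleteVolume"],
                "Resource": "*",
            },
            {
                # Needed so the function's print output reaches CloudWatch Logs.
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "arn:aws:logs:*:*:*",
            },
        ],
    }


print(json.dumps(cleanup_policy(), indent=2))
```

Granting only these actions keeps the role from doing anything beyond the cleanup task, which matters for a function that deletes resources on a schedule.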

Step 6: Paste the Following Code Snippet After the Trigger Is Created

Code Snippet:

    import boto3

    # The region is hard-coded; change it to match the volumes you want to scan.
    ec2 = boto3.resource('ec2', region_name='us-east-1')

    def lambda_handler(event, context):
        for vol in ec2.volumes.all():
            # Only unattached volumes are candidates for deletion.
            if vol.state != 'available':
                continue
            # vol.tags is None when the volume has no tags at all.
            tags = {tag['Key']: tag['Value'] for tag in (vol.tags or [])}
            # Keep any volume whose Name tag is "DND" (Do Not Delete).
            if tags.get('Name') == 'DND':
                continue
            vid = vol.id
            vol.delete()
            print("Deleted " + vid)

You can also download the script here

Important Note: This code checks only whether a volume's Name tag is set to “DND” and deletes every other volume that is in an available state. The code is written in Python, so the indentation must be preserved exactly.
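Because the script deletes data, it can help to test the selection rule on its own before scheduling anything. The sketch below isolates that rule as a pure function over dictionaries shaped like the entries in a boto3 `describe_volumes` response. The function names and the sample volume IDs are hypothetical.

```python
def is_protected(volume, tag_key="Name", tag_value="DND"):
    """True if the volume carries the Do Not Delete marker."""
    tags = volume.get("Tags") or []
    return any(t["Key"] == tag_key and t["Value"] == tag_value for t in tags)


def deletion_candidates(volumes):
    """Return the IDs of unattached volumes that are not marked DND."""
    return [
        v["VolumeId"]
        for v in volumes
        if v["State"] == "available" and not is_protected(v)
    ]


# Hypothetical sample data in the shape returned by describe_volumes.
sample = [
    {"VolumeId": "vol-attached", "State": "in-use"},
    {"VolumeId": "vol-untagged", "State": "available"},
    {"VolumeId": "vol-keep", "State": "available",
     "Tags": [{"Key": "Name", "Value": "DND"}]},
    {"VolumeId": "vol-other", "State": "available",
     "Tags": [{"Key": "Name", "Value": "old-app-data"}]},
]

print(deletion_candidates(sample))
```

Running the same function against the real output of `describe_volumes` gives a dry-run list of what would be removed, which can be reviewed before the deleting version is enabled.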

Lower Amazon EBS Costs with Automation

The script shown above can be scheduled and will help remove unutilized resources and eventually save money.

In the use case discussed above, the organization incurred a huge cost. After automation, they were able to save costs and avoid wasting resources and funds.

It is important to remember that while automation will help reduce costs, data management will still be a challenge. Automation reduces manual errors and removes the dependency on someone remembering to delete unutilized volumes.

Knowing the data life cycle and performing necessary checks on the process integration will let you get a good night’s sleep knowing that you aren’t paying for services you don’t use.

One way you can do that is with NetApp Cloud Volumes ONTAP, the data management platform for AWS storage resources. Cloud Volumes ONTAP is managed through the Cloud Manager GUI, which provides an easy way to see and control all of your storage volumes, whether they're on EBS, Amazon S3, on-prem, or in Azure or Google Cloud. Its reporting features allow you to see the usage patterns of your data over time, and using the RESTful API, you can provision, back up, and most importantly, delete storage volumes as needed.