More about AWS Big Data

- AWS Snowball vs Snowmobile: Data -Migration Options Compared

- AWS Snowball Edge: Data Shipping and Compute at the Edge

- AWS Snowmobile: Migrate Data to the Cloud With the World’s Biggest Hard Disk

- AWS Snowball Family: Options, Process, and Best Practices

- MongoDB on AWS: Managed Service vs. Self-Managed

- Cassandra on AWS Deployment Options: Managed Service or Self-Managed?

- Elasticsearch in Production: 5 Things I Learned While Using the Popular Analytics Engine in AWS

- AWS Data Lake: End-to-End Workflow in the Cloud

- AWS ElastiCache for Redis: How to Use the AWS Redis Service

- AWS Data Analytics: Choosing the Best Option for You

- AWS Big Data: 6 Options You Should Consider

Subscribe to our blog

Thanks for subscribing to the blog.

January 3, 2022

Topics: Cloud Volumes ONTAP Data MigrationAWSElementary6 minute read

What is AWS Snowball?

AWS Snowball, one of the tools within the AWS Snow Family, is known as an edge storage, edge computing, and a data migration device, that is available in two options:

- Snowball Edge Storage Optimized devices have 40 vCPUs and up to 80 TB usable storage (either object or block storage). They are suitable for large scale information transfer and local storage.

- Snowball Edge Compute Optimized devices offer 52 vCPUs, object and block storage, and the option of GPUs for use cases such including full-motion video analysis and advanced machine learning via disconnected environments.

You can use such devices for storage, machine learning, data processing, and data collection in environments with unsteady connectivity (industrial, transportation, and manufacturing) or remote places (such as maritime or military operations). You may also rackmount and cluster these devices together to accommodate larger data volumes.

A Snowball device can run EC2 instances and AWS Lambda functions. This lets you create applications, test them in the cloud, and then run them in remote locations with limited Internet connectivity.

This is part of our series of articles about AWS big data.

In this article:

- The AWS Snowball Product Family

- How Does AWS Snowball Work?

- Best Practices for AWS Snowball

- AWS Storage Optimization with Cloud Volumes ONTAP

The AWS Snowball Product Family

AWS Snowball

AWS Snowball refers to the general service, and Snowball Edge are the types of devices that the service employs—often referred to as AWS Snowball devices. Initially, Snowball hardware designs were for information transport alone. Snowball Edge has the added ability to execute computing locally, even if there is no available network connection.

Snowball Edge Storage Optimized

Snowball Edge Storage Optimized is the best choice if you need to speedily and securely transfer dozens of terabytes or petabytes of information to AWS. It is also a suitable choice for running general analysis, including IoT information aggregation and transformation.

Specifications:

- 80 TB of HDD storage

- 1 TB of SATA SSD storage

- 40 vCPUs

- Up to 40 GB network bandwidth

Snowball Edge Compute Optimized

Snowball Edge Compute Optimized is suitable for use cases that need access to high-speed storage, and powerful compute for information processing before moving the data into AWS. These use cases are, for example, IoT information analytics, high-resolution video processing, and real-time machine learning at the edge.

Specifications:

- 42 TB of HDD storage

- 68 TB of NVMe SSD storage

- 52 vCPUs

- Up to 100 GB network bandwidth

Learn more in our detailed guide to AWS Snowball Edge

How Does AWS Snowball Work?

Importing Data With AWS Snowball

Image Source: AWS

Image Source: AWS

Every import job utilizes one Snowball appliance. You initiate a job via the job management API or Snow Management Console, and Amazon ships you a Snowball device. It should arrive after a few days. You then connect the Snowball to your network and use the S3 Adapter or Snowball client to move your data.

Once you have finished transferring the information, you return the Snowball to AWS, and AWS will import your information into Amazon S3 Storage.

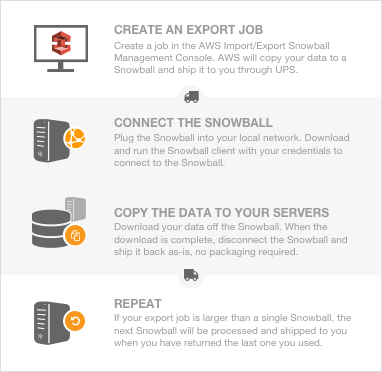

Exporting Data with AWS Snowball

Image Source: AWS

Image Source: AWS

Every export job can utilize several Snowball appliances. Once you create a task in the job management API or AWS Snow Family Management Console, Amazon initiates a listing operation in S3. This listing operation divides your job into sections. Each job section may be up to approximately 80 TB in size and has one Snowball connected to it. The first job then starts the Preparing Snowball stage.

Following this, AWS begins exporting your information onto a Snowball. Exporting your data generally takes one business day but may take longer. After the export is complete, AWS ensures the Snowball device is ready, so the region’s carrier can pick it up. When Amazon brings the Snowball to the office or data center after a few days, you will connect the Snowball to your network and move the information you wish to export to the servers via the S3 Adapter for Snowball or via the Snowball client.

After you have finished transferring your information, you can ship the Snowball back to AWS. After AWS receives the Snowball device back, AWS carries out a thorough erasure of the Snowball. The erasure adheres to the National Institute of Standards and Technology 800-88 standards. If there are additional job sections, the process is repeated for each additional job section.

Related content: For very large data transfers, AWS provides the Snowmobile service. Read our guide to AWS Snowball vs Snowmobile

Best Practices for AWS Snowball

Here are a few best practices that can help you make more effective use of the AWS Snowball family of products:

- Security—be alert to anything suspicious that might indicate the Snowball device has been tampered with, and notify Amazon. Avoid saving your Snowball unlock code in the same place as the job manifest. After a Snowball data transfer completes, delete local log files as they may contain sensitive information.

- Networking—use a powerful workstation as the local host for transferring data to Snowball. If possible, copy all the data to the workstation to conserve network bandwidth. If not, batch your local cache to speed up the copy operation.

- Data transfer—Snowball supports concurrency, so you can use multiple instances of the Snowball client in parallel to speed up data transfer. Never disconnect the Snowball device while data transfer is in progress. Never modify files while transferring data—the Snowball client will not copy files being modified. Amazon recommends having a maximum of 500K items within any directory copied to Snowball.

- Job management—remember you have ten days to perform the data transfer after the Snowball device is shipped. When performing an import job, keep local copies of your data, until the Snowball device is shipped back to Amazon and data is imported into S3.

AWS Storage Optimization with Cloud Volumes ONTAP

NetApp Cloud Volumes ONTAP, the leading enterprise-grade storage management solution, delivers secure, proven storage management services on AWS, Azure and Google Cloud. Cloud Volumes ONTAP capacity can scale into the petabytes, and it supports various use cases such as file services, databases, DevOps or any other enterprise workload, with a strong set of features including high availability, data protection, storage efficiencies, Kubernetes integration, and more.

In particular, Cloud Volumes ONTAP assists with lift and shift cloud migration. Download our free guide The 5 Phases for Enterprise Migration to AWS to learn more.

Cloud Volumes ONTAP also provides storage efficiency features, including thin provisioning, data compression, and deduplication, reducing the storage footprint and costs by up to 70%. Learn more about how Cloud Volumes ONTAP helps cost savings with these Cloud Volumes ONTAP Storage Efficiency Case Studies.