
December 29, 2020

Topics: Cloud Volumes ONTAP, Elementary, Kubernetes

How Does VMware Run Kubernetes?

Kubernetes is the most popular open-source platform for managing container workloads. It supports declarative configuration and powerful automation, and it has a large community and a rapidly growing tools ecosystem.

For VMware administrators, Kubernetes offers a new way to deploy applications and manage their lifecycle, and it is gradually replacing virtual machines as the default way to deploy many workloads.

VMware is strongly focused on integrating its platforms and technologies with Kubernetes. There are two main flavors for running Kubernetes on VMware:

- Running Kubernetes on the traditional vSphere virtualization platform alongside regular virtual machines

- Creating a large-scale multi-cloud environment for containerized workloads using the VMware Tanzu framework

In this article, you will learn about:

- Brief History of Virtualization

- VMware vSphere with Kubernetes

- VMware Tanzu Kubernetes Grid

- Kubernetes on VMware with NetApp Cloud Volumes ONTAP

Brief History of Virtualization

A brief introduction to the history of the various application delivery methods will help you understand the relevance of Kubernetes for modern VMware operations.

Traditional Deployment

Traditionally, applications and workloads were deployed directly to physical servers. This type of deployment is often inflexible, difficult to manage, and wasteful, because each application is tied to a dedicated physical system regardless of how much of that system's resources it actually uses.

Virtualized Deployment

VMware ESXi adds a hypervisor abstraction layer that creates virtual machines, which emulate the functionality of standard physical servers. Each virtual machine is assigned its own resources and operating system, which decouples workloads from the underlying hardware.

Containerized Deployment

Containers are similar to virtual machines, but they are lightweight and do not require an entire operating system of their own. This makes them more portable and flexible than virtual machines. Each container shares the operating system kernel of the host it runs on, but it has its own file system and resource allocation.

Containers are gradually replacing virtual machines as the mechanism of choice for deploying dev/test environments and modern cloud-based applications. VMware has integrated its infrastructure with Kubernetes to let you run containers alongside traditional virtual machines and manage them using familiar VMware technology.

VMware vSphere with Kubernetes

In 2019, VMware announced Project Pacific, an effort to integrate Kubernetes into VMware vSphere. Starting with vSphere 7, the platform supports Kubernetes natively. Developers and administrators can manage clusters and containers with the vSphere tools they already know.

To a developer, vSphere with Kubernetes looks like a standard Kubernetes cluster. The declarative Kubernetes syntax can be used to define resources such as storage, network, scalability and availability. Standard Kubernetes syntax eliminates the need for developers to directly access or understand vSphere APIs or infrastructure.
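To illustrate what "declarative" means here, the following is a minimal sketch of a standard Kubernetes Deployment built as a Python dictionary and printed as JSON, which `kubectl apply -f` accepts just as it accepts YAML. The developer states the desired scale (`replicas`), and Kubernetes works to keep that many pods running. The application name `web` and the image `nginx:1.19` are hypothetical placeholders. Nothing in the manifest is specific to vSphere, which is the point the paragraph above makes.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a minimal apps/v1 Deployment that declares the desired number of pods."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Desired state: Kubernetes keeps this many pod replicas running
            # and replaces pods that fail.
            "replicas": replicas,
            # The selector must match the pod template labels below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 80}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical app name and image, used only for illustration.
    manifest = deployment_manifest("web", "nginx:1.19", replicas=3)
    print(json.dumps(manifest, indent=2))
```

Saved as a script, its output could be applied with `python deploy.py > web.json && kubectl apply -f web.json`. Changing `replicas` and re-applying is all a developer does to scale. No vSphere API call is involved.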

vSphere administrators can use namespaces (used in Kubernetes for policy and resource management) to give developers control over security, resource consumption, and network functions for their Kubernetes clusters.
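In plain Kubernetes terms, a namespace's resource limits are usually expressed as a `ResourceQuota` object. The sketch below builds a namespace and a quota as JSON for `kubectl`. It is a generic Kubernetes illustration only. vSphere administrators normally configure vSphere Namespace limits through vCenter rather than by applying a manifest like this one. The namespace name and the limit values are hypothetical.

```python
import json


def namespace_with_quota(namespace: str, cpu: str, memory: str, storage: str) -> list:
    """Return a Namespace and a ResourceQuota capping what workloads in it may request."""
    ns = {"apiVersion": "v1", "kind": "Namespace", "metadata": {"name": namespace}}
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{namespace}-quota", "namespace": namespace},
        "spec": {
            "hard": {
                # Upper bounds on the sum of requests across all pods/PVCs in the namespace.
                "requests.cpu": cpu,
                "requests.memory": memory,
                "requests.storage": storage,
            }
        },
    }
    return [ns, quota]


if __name__ == "__main__":
    # Hypothetical team namespace and limits.
    items = namespace_with_quota("team-a", cpu="8", memory="32Gi", storage="500Gi")
    # kubectl accepts a v1 List wrapping several objects in one JSON document.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```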

How Does vSphere with Kubernetes Work?

vSphere exposes the Kubernetes API to developers, providing a cloud service experience similar to that of a public cloud. The control plane is built around the namespace entity, which administrators manage. This architecture makes it possible to orchestrate and manage workloads consistently, whatever form they take: containers, virtual machines, or applications.

Spherelet and vSphere Pod Service

vSphere makes the Kubernetes API directly accessible from the ESXi hypervisor, through a custom agent called Spherelet. The agent is based on Kubelet and enables the ESXi hypervisor to act as a local Kubernetes node that can connect to a Kubernetes cluster.

This makes it possible to run containers directly on the hypervisor, without deploying a separate Linux virtual machine as a worker node. To enable this, vSphere includes a new ESXi container runtime called CRX. From the Kubernetes perspective, this capability appears as the vSphere Pod Service.

Supervisor

The Supervisor is a special type of Kubernetes cluster that uses ESXi hosts as worker nodes instead of Linux machines. To make this possible, the Spherelet agent is integrated directly into the ESXi hypervisor. It runs on ESXi itself rather than inside a virtual machine, and workloads run as vSphere Pods. These pods can take advantage of the ESXi hypervisor’s security, performance and high availability properties.

VMware Tanzu Kubernetes Grid

VMware Tanzu Kubernetes Grid Integrated Edition is a dedicated, Kubernetes-first infrastructure solution for multi-cloud organizations. Its production-grade operational capabilities make it well suited to Day 1 and Day 2 operations in large Kubernetes deployments. VMware Tanzu manages Kubernetes deployments across the stack, from the application layer to the infrastructure layer.

Tanzu key capabilities include:

- Multi-cloud—VMware Tanzu is a true multi-cloud platform that can be deployed on-premises, using vSphere (see the previous section) and on public cloud providers such as Google Cloud Platform (GCP), Amazon Web Services (AWS) and Microsoft Azure.

- High availability—VMware Tanzu improves availability for critical production workloads running on Kubernetes clusters. It supports both multi-AZ and multi-master topologies (with redundant copies of etcd). Tanzu monitors the health of virtual machines, heals failed VMs, and manages rolling Kubernetes upgrades across a large number of clusters with no downtime.

- Persistent storage—VMware Tanzu lets you create Kubernetes clusters for stateful applications, with improved support for persistent storage. Project Hatchway provides the vSphere Cloud Provider and a Container Storage Interface (CSI) driver, which expose VMware storage to Kubernetes storage objects. Persistent Volumes and Persistent Volume Claims can then use enterprise-grade features such as Storage Policy-Based Management (SPBM) and VMware vSAN.
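Multi-master topologies keep redundant etcd copies because etcd only accepts writes while a majority (quorum) of its members agree. The arithmetic below is the general etcd rule, not anything specific to Tanzu. It shows why control planes are normally sized with an odd number of members. A 4-member cluster tolerates no more failures than a 3-member one.

```python
def etcd_quorum(members: int) -> int:
    """Number of members that must agree for etcd to accept writes (a strict majority)."""
    if members < 1:
        raise ValueError("an etcd cluster needs at least one member")
    return members // 2 + 1


def etcd_fault_tolerance(members: int) -> int:
    """How many members can fail while a quorum still remains."""
    return members - etcd_quorum(members)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} members: quorum {etcd_quorum(n)}, tolerates {etcd_fault_tolerance(n)} failure(s)")
```

Running it shows that 3 members tolerate one failure and 5 members tolerate two. Even-sized clusters add cost without adding tolerance.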


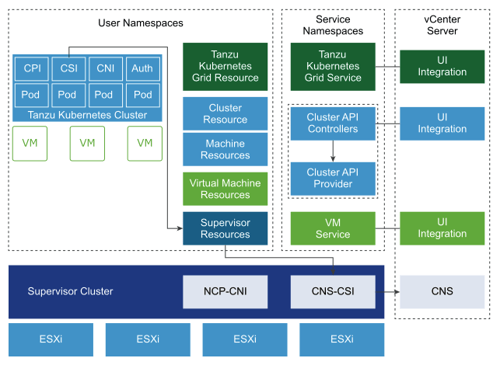

Tanzu Kubernetes Grid Service Components

The Tanzu Kubernetes Grid Service provides a three-tier controller to manage Kubernetes cluster lifecycle:

- Grid Service—provides clusters with the necessary components to integrate with resources available under the Supervisor Namespace.

- Cluster API—provides a Kubernetes-style declarative API for creating, configuring, and managing clusters.

- Virtual Machine Service—provides a Kubernetes-style declarative API for managing virtual machines and other resources in vSphere.

Source: VMware
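Because the Cluster API is declarative, an entire Tanzu Kubernetes cluster can be requested by applying one object to a Supervisor Namespace. The sketch below builds such an object using the `run.tanzu.vmware.com/v1alpha1` `TanzuKubernetesCluster` layout documented for vSphere 7.0 (`distribution.version` plus `topology.controlPlane` and `topology.workers`, each with `count`, `class` and `storageClass`). Field names may differ in later API versions, so treat it as an assumption to check against your release. The cluster name, namespace, version, VM class and storage class are hypothetical values.

```python
import json


def tanzu_cluster_manifest(name: str, namespace: str, version: str,
                           vm_class: str, storage_class: str,
                           control_plane_count: int = 3, worker_count: int = 3) -> dict:
    """Build a TanzuKubernetesCluster spec (assumed v1alpha1 field layout)."""

    def node_pool(count: int) -> dict:
        # Each pool names a VM class (CPU/memory size) and a storage class for node disks.
        return {"count": count, "class": vm_class, "storageClass": storage_class}

    return {
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": node_pool(control_plane_count),
                "workers": node_pool(worker_count),
            },
        },
    }


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    manifest = tanzu_cluster_manifest(
        "tkc-demo", "dev-ns", "v1.18",
        vm_class="best-effort-small", storage_class="vsan-default-storage-policy",
    )
    print(json.dumps(manifest, indent=2))
```

The three control-plane nodes follow the odd-sized etcd rule. The Virtual Machine Service then turns the node counts into vSphere VMs.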

Tanzu Kubernetes Cluster Components

A Tanzu Kubernetes cluster is composed of four primary components:

- Authentication webhook—runs as a pod inside the cluster and validates user authentication tokens.

- Container Storage Interface (CSI) plugin—a paravirtual CSI plugin that integrates with the Cloud Native Storage (CNS) services offered in the Supervisor Cluster.

- Container Network Interface (CNI) plugin—enables networking for Kubernetes pods.

- Cloud Provider Implementation—provides load balancer services for cluster resources.
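From inside a cluster, the cloud provider implementation's role becomes visible when a workload asks for a Service of type `LoadBalancer`. The cloud provider is responsible for fulfilling that request with an external load balancer. The sketch below uses only the standard Kubernetes `Service` fields. The service name, label and ports are hypothetical, and what actually backs the load balancer depends on the environment.

```python
import json


def load_balancer_service(name: str, app_label: str, port: int, target_port: int) -> dict:
    """Build a v1 Service of type LoadBalancer that fronts pods labeled app=<app_label>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # The cluster's cloud provider implementation provisions the external load balancer.
            "type": "LoadBalancer",
            "selector": {"app": app_label},
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    # Hypothetical service exposing pods labeled app=web on port 80.
    print(json.dumps(load_balancer_service("web-lb", "web", port=80, target_port=80), indent=2))
```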

Kubernetes on VMware with NetApp Cloud Volumes ONTAP

NetApp Cloud Volumes ONTAP, the leading enterprise-grade storage management solution, delivers secure, proven storage management services on AWS, Azure and Google Cloud. Cloud Volumes ONTAP supports up to 368 TB of capacity and a range of use cases, including file services, databases, DevOps, and other enterprise workloads. Its feature set includes high availability, data protection, storage efficiencies, Kubernetes integration, and more.

In particular, Cloud Volumes ONTAP provides dynamic Kubernetes Persistent Volume provisioning for persistent storage requirements of containerized workloads.
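Dynamic provisioning in Kubernetes works through a StorageClass plus a PersistentVolumeClaim. When the claim is created, the class's provisioner creates a matching volume on demand. The sketch below assumes NetApp's Trident CSI driver (provisioner `csi.trident.netapp.io`, `backendType` parameter) is installed and configured with a Cloud Volumes ONTAP backend. Verify the provisioner and parameters against your own installation. The class name, claim name and size are hypothetical.

```python
import json


def storage_class(name: str, backend_type: str) -> dict:
    """StorageClass whose provisioner creates volumes on demand (assumes Trident CSI)."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        # Assumption: Trident is the CSI driver connected to Cloud Volumes ONTAP.
        "provisioner": "csi.trident.netapp.io",
        "parameters": {"backendType": backend_type},
        "allowVolumeExpansion": True,
    }


def persistent_volume_claim(name: str, storage_class_name: str, size: str) -> dict:
    """PVC that triggers dynamic provisioning through the named StorageClass."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # NFS-backed (NAS) volumes can be mounted read-write by many pods.
            "accessModes": ["ReadWriteMany"],
            "resources": {"requests": {"storage": size}},
            "storageClassName": storage_class_name,
        },
    }


if __name__ == "__main__":
    # Hypothetical names and size.
    sc = storage_class("cvo-nas", backend_type="ontap-nas")
    pvc = persistent_volume_claim("app-data", sc["metadata"]["name"], "100Gi")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [sc, pvc]}, indent=2))
```

No PersistentVolume is written by hand here. Creating the claim is what causes the volume to be provisioned.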

Learn more about how Cloud Volumes ONTAP helps address the challenges of VMware Cloud, and read about our VMware Cloud Case Studies with Cloud Volumes ONTAP.