November 23, 2017

Topics: Cloud Volumes ONTAP
7 minute read

Do you still rely on a central team to run analytical jobs on your data, or have you discovered the new self-service form of data analytics for enterprise businesses that AWS makes possible with Amazon Athena?

Amazon Athena is a serverless, interactive query service from Amazon that lets you analyze data stored in Amazon S3 using standard structured query language (SQL). It queries structured, semi-structured, or unstructured data directly where it already sits in Amazon S3, without loading the data into Athena.

At its core, Athena uses Presto, an in-memory distributed SQL query engine developed by Facebook and open source since 2013. It is meant for running analytic queries against varied data sources.

The reliability and scalability of the Amazon S3 object store and Athena’s “as-a-service” model are giving a new shape to cloud analytics. In this article we’ll take a closer look at Amazon Athena and show you how it can transform the way you run analytics in the cloud.

An Analytics Game Changer

Running big data analytics on-premises has always been time-consuming and expensive. From provisioning infrastructure to setting up data lakes, the process is quite complex.

Also, owing to the huge upfront investment it takes, small and medium enterprises have always been denied the ability to configure their own big data analytics setups.

Amazon Athena allows companies to sidestep all of that. For companies that make the shift to Amazon Athena, analytics isn’t the challenge that it has posed in the past.

Let’s evaluate 5 of Athena’s features to see how it’s become a game changer for analytics:

1. Serverless: Athena is serverless, which means you don’t need to set up or manage any infrastructure. All the management activities, such as patching and scaling up or down with the user load, are handled by AWS.

2. Pay-Per-Use Model: With Athena’s pay-per-use model, you pay only for the queries that you run on the data already stored in Amazon S3. There is no separate storage cost beyond what you already pay for Amazon S3. Currently, queries on Athena cost $5 per TB of data scanned. You can reduce this further by compressing or partitioning your data or converting it to a columnar format, since queries then have less data to scan. DDL statements such as CREATE, ALTER and DROP are free of charge, while cancelled queries are charged for the amount of data they scanned.

3. Easy to Query: Athena uses Presto as its interactive SQL query engine, which supports analytics on large amounts of data. This means that you can analyze data stored in an Amazon S3 bucket with simple ANSI SQL, including joins, functions and arrays. Athena also supports a range of data formats, such as CSV, JSON and ORC.

4. Fast and Durable: Athena does not load any data, since it works directly on data that is exposed via Amazon S3. Queries on any data set run in parallel, giving quick results to end users. Amazon Athena is also highly available, as AWS will automatically switch services to a different facility if the primary facility becomes unavailable. It also bears mentioning that Amazon S3, which Athena relies on for all its queries, has a durability of 99.999999999%.

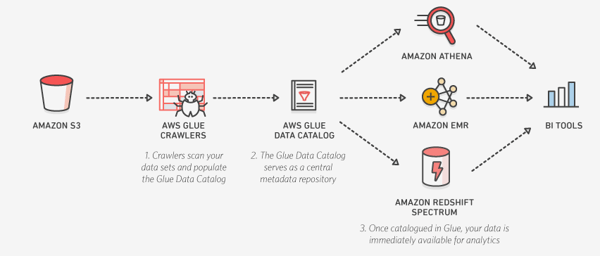

5. Integration with AWS Glue: AWS Glue is the ETL (Extract, Transform and Load) service provided by AWS. With out-of-the-box integration with Amazon Athena, AWS Glue makes analytics even easier. As mentioned above, Athena doesn’t load any data. Instead, it uses a data catalog to store information about the data sets and tables already present in Amazon S3 buckets. This data catalog can be modified via DDL statements or the management console. But if you use AWS Glue along with Athena, AWS Glue crawlers can automatically infer data sets and populate the AWS Glue catalog. Any changes to existing data can also be captured by the crawler and added to the catalog. Once the catalog is created, Athena can refer to it on the fly to execute any query.

Athena and AWS Glue catalog in action
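
To make the pay-per-use point above concrete, here is a minimal sketch of how the quoted $5 per TB rate translates into a per-query cost, and how compression and columnar storage can shrink it. The function names, the 1 TB = 1024^4 bytes convention and the simple multiplicative reduction model are assumptions made for illustration. The sketch ignores any rounding or minimum charges AWS may apply, and the rate itself is the one quoted in this article, so check current AWS pricing before relying on it.

```python
# Rate quoted in the article; an assumption that may no longer match AWS pricing.
PRICE_PER_TB_USD = 5.0
# Assumption: 1 TB is treated as 1024**4 bytes.
BYTES_PER_TB = 1024 ** 4


def estimate_query_cost(bytes_scanned: int,
                        price_per_tb: float = PRICE_PER_TB_USD) -> float:
    """Return an approximate cost in USD for a query that scans bytes_scanned.

    Ignores any rounding or minimum charges the provider may apply.
    """
    if bytes_scanned < 0:
        raise ValueError("bytes_scanned must be non-negative")
    return bytes_scanned / BYTES_PER_TB * price_per_tb


def scanned_after_optimization(raw_bytes: int,
                               compression_ratio: float,
                               column_fraction: float) -> int:
    """Hypothetical model of how much data is scanned after optimization.

    compression_ratio: compressed size divided by raw size (for example 0.25).
    column_fraction: share of the columns the query actually reads,
        which a columnar format lets the engine exploit (for example 0.1).
    """
    if not 0 < compression_ratio <= 1:
        raise ValueError("compression_ratio must be in (0, 1]")
    if not 0 < column_fraction <= 1:
        raise ValueError("column_fraction must be in (0, 1]")
    return int(raw_bytes * compression_ratio * column_fraction)


if __name__ == "__main__":
    raw = 2 * BYTES_PER_TB  # a query that scans 2 TB of raw CSV
    optimized = scanned_after_optimization(raw, compression_ratio=0.25,
                                           column_fraction=0.1)
    print(f"raw scan:       ${estimate_query_cost(raw):.2f}")
    print(f"optimized scan: ${estimate_query_cost(optimized):.2f}")
```

Under these assumed ratios, the same query goes from roughly ten dollars to well under one, which is why data layout matters as much as query design when you pay per byte scanned.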

Object Storage: The Right Choice for Big Data Analytics

Before we define object storage and explain why it is such a good format for big data, let’s take a moment to understand file and block storage.

File storage is a hierarchical way of organizing data in which users can locate any file by giving the right path for that file. The owner and size of the file are just some of the details saved as metadata in the case of file storage.

On the other hand, block storage stores data as small chunks. In the case of an access request, block storage combines these chunks and shares the combined file as an output. Each chunk of data has a unique address which is auto-assigned, over which the end user has no control.

Mostly, block storage is used for performance-oriented applications, such as transactional databases.

Object storage is different. An object consists of data and metadata associated with that data. This metadata is highly customizable, and the end user has full control to carry out these customizations.

For example, let’s consider an X-ray object: Metadata for the X-ray can include a patient’s name, address, body part and injury details, making the file a lot more informative for doctors whenever they access the X-ray down the line. Also, these objects are stored in a flat address space, making object storage highly scalable and accessible.
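
The X-ray example can be sketched as a toy in-memory object store: each object pairs its data with user-defined metadata, and every object lives under a single key in one flat namespace. The class and method names here (`FlatObjectStore`, `put`, `get`, `find`) are hypothetical and chosen for illustration. This is not the API of Amazon S3 or any other real object store, and the metadata search in particular is only there to show why rich metadata is useful.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class StoredObject:
    """An object is its raw data plus user-defined metadata."""
    data: bytes
    metadata: Dict[str, str] = field(default_factory=dict)


class FlatObjectStore:
    """Toy object store: one flat key space, no directories or paths."""

    def __init__(self) -> None:
        self._objects: Dict[str, StoredObject] = {}

    def put(self, key: str, data: bytes, **metadata: str) -> None:
        """Store data under key, attaching any metadata the caller supplies."""
        self._objects[key] = StoredObject(data, dict(metadata))

    def get(self, key: str) -> StoredObject:
        """Return the object stored under key (raises KeyError if missing)."""
        return self._objects[key]

    def find(self, **criteria: str) -> List[str]:
        """Return the sorted keys whose metadata matches every criterion."""
        return sorted(
            key for key, obj in self._objects.items()
            if all(obj.metadata.get(name) == value
                   for name, value in criteria.items())
        )


if __name__ == "__main__":
    store = FlatObjectStore()
    store.put("xray-0001", b"<image bytes>",
              patient="Jane Doe", body_part="wrist", injury="fracture")
    store.put("xray-0002", b"<image bytes>",
              patient="John Roe", body_part="knee", injury="sprain")
    print(store.find(body_part="wrist"))
```

Because the key space is flat, adding more objects never requires restructuring a directory tree, which is one reason this model scales so easily.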

In a nutshell, object storage is highly scalable and can carry a huge amount of metadata, making it an ideal fit for analytical workloads.

How NetApp Helps Run Analytics

In the past, having a big data setup for your enterprise meant huge investments in your data center. But with public clouds providing this solution as a service, of which Amazon Athena is one example, data analytics for enterprise businesses has become a lot more agile and affordable.

In addition to this huge step offered by the public cloud, NetApp offers solutions that can make analysis even easier.

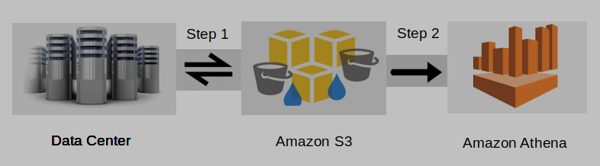

NetApp’s Cloud Sync provides a syncing service between your data center and your Amazon S3 bucket. It works not only with NetApp storage appliances, but with any NFSv3/CIFS share. A typical end-to-end scenario can look something like the one pictured here:

Getting data from the data center to Athena with Cloud Sync.

The customer uses NetApp Cloud Sync to transfer data from the data center to an Amazon S3 bucket, at which point Athena can run queries on that data. Cloud Sync can also transfer the results back to your data center, so that it always stays up to date.

NetApp Cloud Sync can be very useful for multiple use cases, including data migration to cloud, data tiering and data archiving. It is also efficient: Only the delta is transferred in every synchronization cycle, making updates faster. There is no need to repeat the complete transfer process, because Cloud Sync can pick up from the last point it stopped. It is also secure: Data remains protected in your data center environment and is not transferred to NetApp’s domain.
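
The idea of transferring only the delta can be illustrated with a generic sketch. This is not Cloud Sync’s actual implementation, which the article does not describe. It is a minimal model, under the assumption that each side can be summarized as a mapping from path to a content fingerprint. The names `fingerprint` and `compute_delta` are hypothetical, and whether a real sync service also removes files that have disappeared from the source is likewise an assumption of this sketch.

```python
import hashlib
from typing import Dict, List, Tuple


def fingerprint(content: bytes) -> str:
    """Summarize file content as a SHA-256 hex digest."""
    return hashlib.sha256(content).hexdigest()


def compute_delta(source: Dict[str, str],
                  target: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Compare two {path: fingerprint} listings.

    Returns (to_copy, to_delete):
      to_copy   - paths that are new or changed on the source side
      to_delete - paths on the target that no longer exist on the source
                  (an assumption; a real service may handle these differently)
    """
    to_copy = sorted(path for path, fp in source.items()
                     if target.get(path) != fp)
    to_delete = sorted(path for path in target if path not in source)
    return to_copy, to_delete


if __name__ == "__main__":
    source = {
        "logs/day1.csv": fingerprint(b"unchanged"),
        "logs/day2.csv": fingerprint(b"edited since last sync"),
        "logs/day3.csv": fingerprint(b"brand new"),
    }
    target = {
        "logs/day1.csv": fingerprint(b"unchanged"),
        "logs/day2.csv": fingerprint(b"old version"),
        "logs/day0.csv": fingerprint(b"removed at source"),
    }
    to_copy, to_delete = compute_delta(source, target)
    print("copy:  ", to_copy)
    print("delete:", to_delete)
```

In this model an unchanged file costs nothing beyond comparing its fingerprint, so the work in each cycle is proportional to what changed rather than to the total size of the data set.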

Summary

Data has become one of the most important assets that a company owns, so gaining insights and extracting more out of your data are more important now than ever. With the public cloud providers offering service-based analytics, such as Amazon Athena, companies can get more insights without the expensive complications of home-built analytic tools.

Serverless and employing ANSI SQL, Amazon Athena makes data queries quick to set up, fast to run, and easy to use. The pay-per-use model also makes Athena affordable, much more so than running your own analytics.

Since it works with Amazon S3, it comes with unmatched durability, scalability, reliability and the power of object storage, the best-suited format for running analytics workloads.

NetApp is making Athena even more effective at delivering the insights that companies need from their data.

Find out more about how NetApp can help you in data analytics for enterprise businesses, data migration, data transfers and data synchronization using the Cloud Sync service.

Want to get started? Try out Cloud Volumes ONTAP today with a 30-day free trial.