More about AWS EBS

- Amazon EBS Elastic Volumes in Cloud Volumes ONTAP

- AWS EBS Multi-Attach Volumes and Cloud Volumes ONTAP iSCSI

- AWS EBS: A Complete Guide and Five Cool Functions You Should Start Using

- AWS Snapshot Automation for Backing Up EBS Volumes

- How to Clean Up Unused AWS EBS Volumes with A Lambda Function

- Boost your AWS EBS performance with Cloud Volumes ONTAP

- Are You Getting Everything You Can from AWS EBS Volumes?: Optimizing Your Storage Usage

- EBS Pricing and Performance: A Comparison with Amazon EFS and Amazon S3

- Cloning Amazon EBS Volumes: A Solution to the AWS EBS Cloning Problem

- The Largest Block Storage Volumes the Public Cloud Has to Offer: AWS EBS, Azure Disks, and More

- Storage Tiering between AWS EBS and Amazon S3 with NetApp Cloud Volumes ONTAP

- Lowering Disaster Recovery Costs by Tiering AWS EBS Data to Amazon S3

- 3 Tips for Optimizing AWS EBS Performance

- AWS Instance Store Volumes & Backing Up Ephemeral Storage to AWS EBS

- AWS EBS and S3: Object Storage Vs. Block Storage in the AWS Cloud


October 23, 2017

Topics: Cloud Volumes ONTAP, Azure, AWS, Advanced | 7 minute read

With the ever-growing amount of data that companies generate today, you can never have enough storage space. When the time comes to add new disks, you have to consider not only the large upfront costs of hardware that matches large block storage volumes such as AWS EBS, but also delivery time, available rack space, and the installation and setup of the physical disks.

Public cloud providers have completely changed all this, allowing us to provision volumes with just a few clicks, without upfront costs, and at competitive prices.

As the needs of customers have grown, so have the types of block storage offered by the public cloud service providers. Volumes are getting larger, and new volume types of various categories and performance capabilities are being introduced all the time.

In this article we will take a look at the largest block storage volumes offered by AWS and Azure, examine the best ways to use them, and review some of the disadvantages that come with such large volumes.

AWS Block Storage: Amazon EBS

Amazon Elastic Block Store (Amazon EBS) is Amazon’s persistent block level storage solution to be used with Amazon EC2 instances. It is one of several storage options provided by AWS.

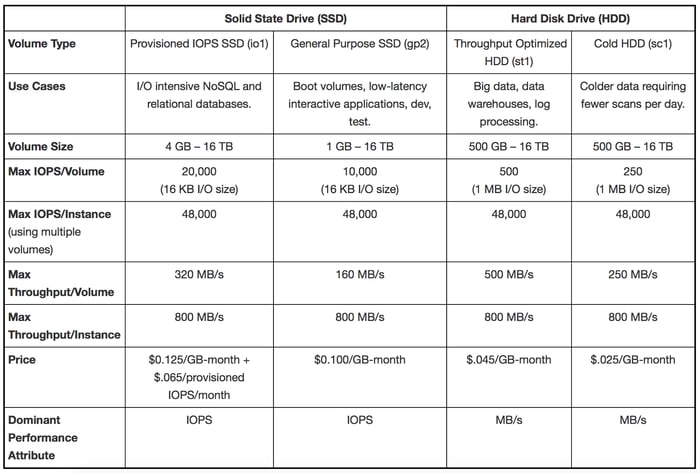

There are two main categories of Amazon EBS:

- SSD

- HDD

These volumes are offered in different types and sizes, but they also allow for encryption, snapshot creation, and even elasticity — a new feature introduced in one of the latest updates.

Elasticity allows users to modify volume size, performance, or even volume type, all while the volume is in use. With AWS, volumes can be as large as 16TB for all volume types (except previous generation Magnetic volumes, which are rarely used nowadays).

When to Use

If you have vast amounts of data that need to be stored on a filesystem but will be accessed only occasionally, and speed is not a concern, a large Cold HDD (sc1) volume is a great and very cheap option. Priced at $.025/GB-month, it rivals the cost of Amazon S3 Standard ($.025/GB-month for the first 50TB). If you need more than 16TB of disk space, consider combining several volumes with RAID.

For situations that require a high-throughput sequential workload such as big data or log processing, Amazon’s Throughput Optimized HDD (st1) is an easy choice. Priced at $.045/GB-month, while offering max throughput of 500 MB/s, this low-cost, high-capacity/high-performance volume is a great option for Hadoop clusters, especially with the 16TB size, as Hadoop is known to consume large amounts of disk space.

Since both st1 and sc1 are optimized for sequential I/O workloads, they are also ideal for deploying Apache Kafka on AWS. They provide the reliability that Kafka requires, while maintaining a very low cost per GB, compared with other Amazon EBS options.

If you have I/O-intensive workloads, Provisioned IOPS SSD (io1) provides IOPS as high as 20,000. With a cost of $.125/GB-month plus $.065/PIOPS-month, it is by far the most expensive volume AWS has to offer. But for databases with large workloads or high-performance, mission-critical applications, io1 provides the necessary and sustained performance they require. There are additional techniques you can employ to optimize AWS EBS performance.
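To make the price differences above concrete, here is a minimal Python sketch that estimates the monthly cost of a single volume. It uses only the per-GB and per-PIOPS prices quoted in this article, which may no longer match current AWS pricing. The function name `monthly_cost` and the conversion of 16TB to 16 × 1024 GB are assumptions made for illustration.

```python
# Prices per GB-month as quoted in this article; treat them as illustrative,
# not as current AWS pricing.
PRICE_PER_GB_MONTH = {"sc1": 0.025, "st1": 0.045, "io1": 0.125}

# io1 is also billed per provisioned IOPS (PIOPS), as quoted in this article.
IO1_PRICE_PER_PIOPS_MONTH = 0.065


def monthly_cost(volume_type, size_gb, provisioned_iops=0):
    """Estimate the monthly cost in USD of one EBS volume (hypothetical helper)."""
    cost = PRICE_PER_GB_MONTH[volume_type] * size_gb
    if volume_type == "io1":
        cost += IO1_PRICE_PER_PIOPS_MONTH * provisioned_iops
    return round(cost, 2)


if __name__ == "__main__":
    size_gb = 16 * 1024  # assumption: 16TB expressed as 16 x 1024 GB
    for volume_type, iops in (("sc1", 0), ("st1", 0), ("io1", 20000)):
        print(f"{volume_type}: ${monthly_cost(volume_type, size_gb, iops):,.2f} per month")
```

With these inputs, a full-size sc1 volume comes to roughly $410 per month, st1 to roughly $737, and io1 with 20,000 provisioned IOPS to roughly $3,348, which illustrates how much of the io1 bill comes from the IOPS charge rather than from capacity.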

To get a clear view of the Amazon EBS workload of your large volumes, you can benchmark them using the fio tool to measure I/O performance, or alternatively you can use Amazon CloudWatch and look at the Amazon EBS performance metrics.

If you are unsure which volume to use, or if none of the volumes described above seem to fit your needs, you can always fall back to gp2, as it has a great balance of price and performance.

Here is the table showing specifications and cost of Amazon EBS volumes (Magnetic volume is not included):

Drawbacks

Having a single volume of 16TB can be useful, but can also have its downsides.

Consider what it takes to do disaster recovery backups for such large volumes. Creating an initial snapshot of a 16TB volume will take a lot of time, and after that there is transferring the snapshot to another region, which will cost you both more time and more money due to the sheer quantity of data being moved.

Also, consider restoration of those snapshots. When you first access each block of data on a new Amazon EBS volume that was restored from a snapshot, there can be a significant increase in latency, up to 50%. One solution to latency issues is to pre-warm your volumes to make sure your application doesn’t run into performance issues.

You can do this by using tools such as dd and fio on both Linux and Windows. But pre-warming a large volume can take a very long time, so keep that in mind as it can impact your SLA.
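The idea behind pre-warming is simply to read every block of the volume once. The Python sketch below illustrates that idea under stated assumptions: the function name `prewarm`, the 1 MiB block size, and the example device path are all hypothetical, and in practice a tool such as dd or fio would normally do this work.

```python
def prewarm(path, block_size=1024 * 1024):
    """Read every block of `path` once and return the number of bytes read.

    `path` can be a block device or a regular file. The data is discarded;
    the only goal is to touch each block so later reads do not pay the
    first-access penalty.
    """
    total = 0
    # buffering=0 gives unbuffered, raw reads of the requested size.
    with open(path, "rb", buffering=0) as device:
        while True:
            chunk = device.read(block_size)
            if not chunk:  # an empty read means end of device or file
                break
            total += len(chunk)
    return total


# Example call (hypothetical device name, typically requires root):
# prewarm("/dev/xvdf")
```

Because the loop is sequential and reads the whole device, the run time grows with volume size, which is why pre-warming a 16TB volume can take so long.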

As you can see, very large volumes can produce additional overhead, aside from additional cost, so make sure to always choose an optimal volume size for your instances.

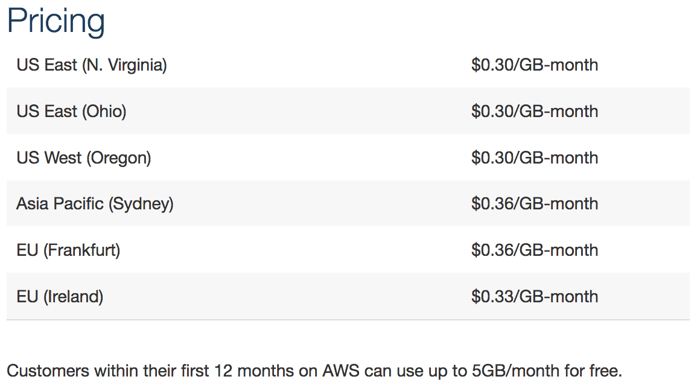

An AWS Alternative: Amazon EFS

One alternative to getting a large volume is to use Amazon Elastic File System (Amazon EFS).

Amazon EFS is a scalable file storage service that works like an NFS mount (using Amazon EFS with Amazon EC2 instances running Windows is not supported). With this service you only pay for storage used, while bandwidth and mounts are free.

Amazon EFS storage capacity is elastic, so it changes as you add or delete files, allowing you to have as much storage as you need, when you need it. Amazon EFS is best used for big data and analytics, home directories, and media processing workflows.

The drawbacks to Amazon EFS are that individual file writes can have noticeable latency and, if used for web servers, Amazon EFS may cause a high response time. Also, this service is not yet available in all regions, which obviously will affect a lot of users.

Below is the price table for Amazon EFS:

Azure Block Storage: Managed Disks

Azure’s managed disks are the recommended option to be used with Azure virtual machines (though you can also opt for unmanaged disks).

Managed disks handle storage for you, and offer features such as better reliability for Availability Sets (logical groupings used for tiering VMs), Azure Backup Service (allowing for backups in a different region), and Role-Based Access Control (to allow users specific permissions for a managed disk).

Two types of managed disks are currently being offered: Premium (SSD) and Standard (HDD). The largest disks are P50 (a high-performance, low-latency disk to be used for I/O-intensive workloads) and S50 (best used for dev/test or other workloads that are not sensitive to performance variability), both of which can be up to 4TB in size.

Drawbacks

Disk size is relatively small, and you would have to use either RAID or Storage Spaces (a modern technology similar to RAID, designed for scalability and performance) if you needed more space.

At the moment Azure doesn’t support incremental snapshots for managed disks. However, that feature is planned to be implemented in the future. In addition, when it comes to shrinking disks, that option is currently not possible with managed disks.

Unmanaged disks are also an option: they can go as high as 8TB in size (P60 disk type) and they do support incremental snapshots. However, choosing an unmanaged disk would prevent you from using managed disks in the same Availability Set, because you can use either one or the other but cannot mix and match between the two.

When to Use

The advantage of using larger volumes on Azure is that they allow you to save money when your workloads are not too demanding but do require a lot of storage space. To have more disks on a single machine you have to provision a larger machine, as the number of attached disks allowed increases with machine size.

With Azure’s latest 4TB-size disks you can use a smaller and much cheaper machine, compared with using 1TB disks, where you would need a much larger machine to support more disks for the same combined disk space.
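The arithmetic behind this comparison can be sketched in a few lines of Python. The helper name `disks_needed` and the 16TB target capacity are assumptions chosen for illustration; the actual number of disks a given VM size can attach should be checked against Azure's documentation.

```python
import math


def disks_needed(target_tb, disk_size_tb):
    """Return how many disks of `disk_size_tb` are needed to reach `target_tb`."""
    if disk_size_tb <= 0:
        raise ValueError("disk_size_tb must be positive")
    return math.ceil(target_tb / disk_size_tb)


if __name__ == "__main__":
    target_tb = 16  # assumed combined capacity, for illustration only
    for disk_size_tb in (1, 4):
        count = disks_needed(target_tb, disk_size_tb)
        print(f"{disk_size_tb}TB disks: {count} needed for {target_tb}TB")
```

For the same 16TB of combined space, 1TB disks require sixteen attachments while 4TB disks require four, so a machine size with a lower attached-disk limit may be enough.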

Enhancing Block Storage with Cloud Volumes ONTAP

Improvements to existing services are being made and new services and features are being introduced on a regular basis by both AWS and Azure, but users who need to get more from their block storage service on either platform right now can turn to NetApp Cloud Volumes ONTAP.

Cloud Volumes ONTAP is a data management layer that runs as an instance on top of Amazon EBS, Azure disks, or even Google Cloud Persistent Disk. Through its single-pane Cloud Manager GUI, Cloud Volumes ONTAP allows users to control their entire storage deployment, reduce cloud data storage costs with storage efficiencies, streamline the dev/test pipeline with IaC automation and zero-cost full-volume data cloning, and much more.