March 1, 2017

Topics: Cloud Volumes ONTAP, Cloud Storage (6 minute read)

If you’re reading this article, it’s safe to say you know a bit about the cloud, AWS, and the reasons why cloud-based storage is gaining popularity all around the world and in every business vertical out there.

The cloud provides scalable, durable and highly available storage solutions.

One of the popular offerings from cloud providers is Network Attached Storage, or NAS, on the cloud. Cloud provider storage is accessible over the Internet and is ideal for backup and archiving. Cloud-based NAS also spares you from dealing with hardware failures yourself and offers high availability.

However, if performance and security are concerns, some organizations prefer to keep a local NAS as well. NAS on the cloud can be used for scalable storage, backup, disaster recovery (DR), archiving, shared file systems (such as AWS EFS), and enterprise application delivery.

An effective NAS typically leverages RAID. To build a RAID array in the cloud, you can combine multiple block storage volumes, either striping them for performance (RAID 0) or mirroring them for high availability (RAID 1).

But how do we create RAID?

Don’t worry. We’ve got you covered.

A Step-by-Step Guide: Creating RAID with EBS Using Ubuntu 14.04

Step 1:

Collect the following information about the running EC2 instance:

- Instance ID

- Region (example: us-east-1)

- Access key ID

- Secret access key

- Elastic IP address
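If you prefer to look some of these values up from inside the instance, the sketch below queries the EC2 instance metadata service (the link-local endpoint 169.254.169.254) for the instance ID and Availability Zone, and derives the region by dropping the zone's trailing letter. It assumes curl is installed and that plain, unauthenticated metadata requests are allowed; the function names are illustrative. Your access key ID and secret access key are not available from the metadata service, so configure those separately, for example with `aws configure`.

```shell
#!/usr/bin/env bash
# Sketch: look up instance details from the EC2 instance metadata service.
# Assumes curl is available and unauthenticated metadata requests are allowed.
METADATA_URL="http://169.254.169.254/latest/meta-data"

# Print the ID of the instance this script runs on.
instance_id() {
  curl -s "$METADATA_URL/instance-id"
}

# Print the Availability Zone of this instance (for example us-east-1a).
availability_zone() {
  curl -s "$METADATA_URL/placement/availability-zone"
}

# Derive the region from an Availability Zone name by stripping the
# trailing zone letter: us-east-1a -> us-east-1.
az_to_region() {
  printf '%s\n' "$1" | sed 's/[a-z]$//'
}

# Example (run on the EC2 instance):
#   echo "Instance: $(instance_id)"
#   echo "Region:   $(az_to_region "$(availability_zone)")"
```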

Step 2:

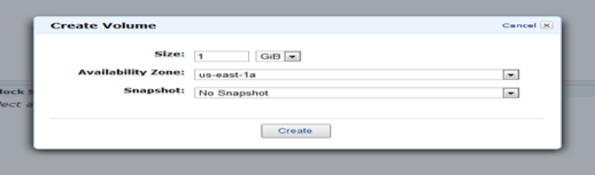

Create (provision) an EBS volume using the AWS Management Console. The volume must be in the same Availability Zone as the EC2 instance.

Step 3:

Repeat Step 2 to create another EBS volume.
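The same provisioning can also be scripted with the AWS CLI's `aws ec2 create-volume` command. This is a hedged sketch rather than part of the original walkthrough: the 100 GiB size, the gp2 volume type, and the Availability Zone are placeholder assumptions, so substitute your instance's zone. By default it only prints the commands; set `DRY_RUN=0` to run them.

```shell
#!/usr/bin/env bash
# Sketch: provision N identical EBS volumes with the AWS CLI.
# Assumptions (placeholders, adjust to your setup): AZ, size, gp2 type.
# DRY_RUN=1 (the default) prints commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# create_volumes <availability-zone> <size-gib> <count>
# The volumes must be in the same Availability Zone as the instance.
create_volumes() {
  local az="$1" size="$2" count="$3" i
  for i in $(seq 1 "$count"); do
    run aws ec2 create-volume \
      --availability-zone "$az" \
      --size "$size" \
      --volume-type gp2 \
      --query VolumeId --output text
  done
}

# Example: two 100 GiB volumes for a two-disk RAID 0 array.
#   create_volumes us-east-1a 100 2
```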

Step 4:

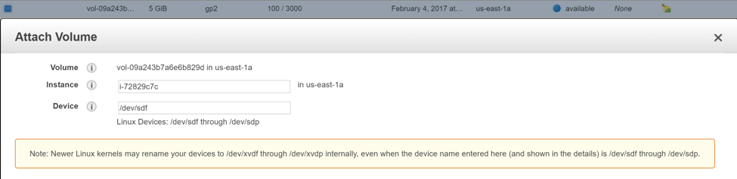

Attach each EBS volume to the EC2 instance:

- Select the volume, choose Attach Volume from the Actions menu, and pick the relevant EC2 instance

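- Make sure you note the device name (example: /dev/sdm) assigned to the volume. Inside the instance, Ubuntu may expose the device under a different name, such as /dev/xvdm (check with lsblk); if so, use that name in the commands below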

- Repeat this step for each volume you created
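Attaching can be scripted too, with `aws ec2 attach-volume`. In this sketch the volume IDs and instance ID are hypothetical placeholders. The helper `xen_name` maps a console name such as /dev/sdm to the Xen-style guest name /dev/xvdm, and `guest_device` (run inside the instance) prints whichever of the two actually exists, so you know which name to pass to mdadm. It defaults to a dry run for the AWS call.

```shell
#!/usr/bin/env bash
# Sketch: attach EBS volumes and find the device name inside the guest.
# Volume IDs, instance ID and device names are hypothetical placeholders.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# attach_volume <volume-id> <instance-id> <device>
attach_volume() {
  run aws ec2 attach-volume --volume-id "$1" --instance-id "$2" --device "$3"
}

# Map a console device name to its Xen-style guest name: /dev/sdm -> /dev/xvdm.
xen_name() {
  printf '%s\n' "$1" | sed 's#^/dev/sd#/dev/xvd#'
}

# Print whichever block device name exists on this instance; fail if neither.
guest_device() {
  local alt
  alt="$(xen_name "$1")"
  if [ -b "$1" ]; then
    printf '%s\n' "$1"
  elif [ -b "$alt" ]; then
    printf '%s\n' "$alt"
  else
    return 1
  fi
}

# Example:
#   attach_volume vol-1111aaaa i-2222bbbb /dev/sdm
#   attach_volume vol-3333cccc i-2222bbbb /dev/sdn
#   guest_device /dev/sdm
```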

Step 5:

- Log in to the instance via SSH.

- To set up RAID 0, we need the Linux “mdadm” utility.

- Install mdadm and the XFS file system tools (xfsprogs) using the following command:

$ sudo apt-get install -y mdadm xfsprogs

Step 6:

- Create the RAID drive as /dev/md0 using the two attached disks /dev/sdm and /dev/sdn.

- We set the chunk size to 256 KiB (mdadm interprets the --chunk value in kibibytes).

$ sudo mdadm --create /dev/md0 --level 0 --chunk=256 --metadata=1.1 --raid-devices=2 /dev/sdm /dev/sdn
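With a 256 KiB chunk, mdadm writes 256 KiB to one member before moving to the next, so a full stripe across two disks covers 512 KiB. The sketch below wraps the Step 6 command so that `--raid-devices` always matches the number of devices you pass, and computes the stripe width. The function names are illustrative, and it only prints the command unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
# Sketch: create a RAID 0 array from any number of member devices.
# DRY_RUN=1 (the default) only prints the mdadm command.
DRY_RUN="${DRY_RUN:-1}"
CHUNK_KIB=256   # chunk size used in Step 6, in KiB

run() {
  if [ "$DRY_RUN" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# create_raid0 <md-device> <member>...
create_raid0() {
  local md="$1"
  shift
  # "$#" is now the number of member devices.
  run sudo mdadm --create "$md" --level=0 --chunk="$CHUNK_KIB" \
    --metadata=1.1 --raid-devices="$#" "$@"
}

# Full stripe width in KiB = chunk size x number of members.
stripe_width_kib() {
  echo $(( $1 * $2 ))
}

# Example:
#   create_raid0 /dev/md0 /dev/sdm /dev/sdn
#   stripe_width_kib "$CHUNK_KIB" 2    # 512
```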

Step 7:

- Run these commands to write the member devices and the array definition to the mdadm.conf file so the configuration persists across reboots:

$ echo DEVICE /dev/sdm /dev/sdn | sudo tee /etc/mdadm/mdadm.conf

$ sudo mdadm --detail --scan | sudo tee -a /etc/mdadm/mdadm.conf
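Before relying on the configuration, it is worth confirming the array is active. The kernel reports software RAID state in /proc/mdstat, where an assembled array appears on a line such as `md0 : active raid0 sdn[1] sdm[0]`; the hedged helper below checks for that line. On Ubuntu it is also commonly recommended to run `sudo update-initramfs -u` after editing /etc/mdadm/mdadm.conf so the array is assembled under the same name at boot; that step is shown only as a comment because the original walkthrough does not include it.

```shell
#!/usr/bin/env bash
# Sketch: check that an md array is active, reading /proc/mdstat-style text.
# md_is_active <name> [file]   e.g. md_is_active md0
md_is_active() {
  local name="$1" file="${2:-/proc/mdstat}"
  # Active arrays are listed as: "md0 : active raid0 sdn[1] sdm[0]"
  grep -q "^$name : active" "$file"
}

# Assumption: on Ubuntu, refresh the initramfs so it picks up mdadm.conf:
#   sudo update-initramfs -u

# Example:
#   md_is_active md0 && echo "md0 is active"
#   cat /proc/mdstat
```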

Step 8:

- Create an XFS file system on the /dev/md0 drive:

$ sudo mkfs.xfs /dev/md0

Step 9:

- Next, add the /dev/md0 drive to the file systems table for auto-mounting on reboot:

$ echo "/dev/md0 /raiddrive xfs noatime 0 0" | sudo tee -a /etc/fstab
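Running the Step 9 command twice appends a duplicate line to /etc/fstab. A slightly safer variant, sketched below, only appends the entry if the mount point is not already listed. The `FSTAB` variable is an illustrative override so the logic can be tried on a scratch file. Adding the `nofail` mount option, which lets the instance continue booting if the array is missing, is a suggestion and not part of the original entry.

```shell
#!/usr/bin/env bash
# Sketch: add an fstab entry only if its mount point isn't already present.
# FSTAB can point at a scratch file for testing (default /etc/fstab).
FSTAB="${FSTAB:-/etc/fstab}"

# add_fstab_entry <device> <mount-point> <fstype> <options>
add_fstab_entry() {
  local dev="$1" mnt="$2" fstype="$3" opts="$4" line
  # Field 2 of each non-comment line is the mount point; exit 0 if found.
  if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { found = 1 } END { exit !found }' "$FSTAB" 2>/dev/null; then
    return 0   # already configured; nothing to do
  fi
  line="$(printf '%s %s %s %s 0 0' "$dev" "$mnt" "$fstype" "$opts")"
  if [ -w "$FSTAB" ]; then
    printf '%s\n' "$line" >> "$FSTAB"
  else
    printf '%s\n' "$line" | sudo tee -a "$FSTAB" > /dev/null
  fi
}

# Example (the original entry, optionally with nofail added):
#   add_fstab_entry /dev/md0 /raiddrive xfs noatime,nofail
```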

Step 10:

- Now, create the mount point and mount the RAID drive (it was already formatted in Step 8):

$ sudo mkdir /raiddrive

$ sudo mount /raiddrive

Step 11:

- The following command sets the read-ahead buffer to 65,536 512-byte sectors (32 MiB). A large read-ahead can improve the performance of EBS drives for large sequential I/O. Note that this setting does not persist across reboots.

$ sudo blockdev --setra 65536 /dev/md0
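Because `blockdev --setra` counts in 512-byte sectors, it helps to convert between sectors and bytes before choosing a value. The small helpers below, whose names are illustrative, do that conversion; `blockdev --getra` (shown in a comment) reads back the current setting in sectors.

```shell
#!/usr/bin/env bash
# Sketch: convert between blockdev read-ahead sectors and bytes.
# blockdev --setra/--getra use 512-byte sectors.
SECTOR_BYTES=512

ra_sectors_to_bytes() {
  echo $(( $1 * SECTOR_BYTES ))
}

ra_bytes_to_sectors() {
  echo $(( $1 / SECTOR_BYTES ))
}

# Examples:
#   ra_sectors_to_bytes 65536            # 33554432 bytes (32 MiB)
#   ra_bytes_to_sectors $(( 64 * 1024 )) # 128 sectors (64 KiB)
#   sudo blockdev --getra /dev/md0       # current value, in sectors
```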

Step 12:

- Verify the drive is operating:

$ df -h /raiddrive
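For a scripted check, the hedged helper below parses POSIX `df -P` output to confirm that a directory is its own mount point and, optionally, that it is backed by an expected device such as /dev/md0. The function name is illustrative, and it assumes the mount point path contains no spaces.

```shell
#!/usr/bin/env bash
# Sketch: verify that a directory is mounted (optionally from a given device).
# df -P columns: Filesystem 1024-blocks Used Available Capacity Mounted-on
# check_mount <dir> [device]
check_mount() {
  local dir="$1" want_dev="${2:-}" line dev mnt
  line="$(df -P "$dir" | awk 'NR == 2 { print $1, $6 }')"
  dev="${line%% *}"   # first field: source device
  mnt="${line#* }"    # second field: mount point
  [ "$mnt" = "$dir" ] || return 1
  [ -z "$want_dev" ] || [ "$dev" = "$want_dev" ]
}

# Example:
#   check_mount /raiddrive /dev/md0 && echo "RAID drive is mounted"
```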

The Challenges with NAS Using EBS

Now that you know how to create a RAID array, it’s also important to understand the challenges it can introduce. RAID is straightforward to configure for SAN (block) storage, but building a NAS on top of it is trickier and poses some difficulties worth mentioning.

1. Scalability

Although NAS devices can scale to terabytes of storage capacity, once that capacity is exhausted the only way to expand is to add more devices. This can be a problem when data center real estate is at a premium.

Once a NAS device is fully populated, including external storage enclosures, the only remaining scaling option is to buy another system.

In the cloud, this is addressed by AWS’s offering of virtually unlimited storage.

2. Data Loss and Recovery

A major drawback of the NAS we just built is that it relies on Linux software RAID, and RAID 0 stripes data without any redundancy: if one drive fails, all data in the array is lost. Therefore, a RAID 0 array should not be used for mission-critical systems.

With redundant RAID levels, rebuilding the data after a disk failure may take a day or longer, depending on the load on the array and the speed of the controller. If another disk fails during the rebuild, the data might be lost.
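To see why RAID 0 is risky, assume, purely for illustration, that each of the n member volumes fails independently with the same probability p over some period. The array survives only if every member survives, so the probability of losing the array is:

```latex
P_{\text{loss}} = 1 - (1 - p)^{n}
\qquad \text{e.g. } p = 0.002,\ n = 2:\quad 1 - 0.998^{2} = 0.003996 \approx 0.4\%
```

With this hypothetical p, a two-volume stripe roughly doubles the risk of a single volume, and the risk keeps growing as members are added.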

3. Network Bandwidth

Ultimately, NAS appliances share the network with the compute systems they serve, so a NAS solution consumes bandwidth on the same TCP/IP network.

On top of that, the performance of a remotely hosted NAS device depends on the bandwidth available on the wide area network (WAN), which is also shared with compute traffic. Therefore, WAN optimization is needed when deploying NAS remotely in limited-bandwidth scenarios.

To address this problem, it is recommended that the network architecture follow an established, standard topology and apply Quality of Service (QoS) policies.

With QoS, you can give traffic from different applications different priorities and size your network switches according to throughput needs. Also, use a network management system or monitoring tool, such as Cacti, or flow-monitoring protocols such as sFlow or NetFlow, to get a complete view of performance and congestion in the network.

4. Data Duplication

NAS is often implemented with RAID 1 (mirroring) for high availability.

The main disadvantage, however, is that the effective storage capacity is only half of the total drive capacity, because all data is written twice.
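To put numbers on the trade-off: with equal-size members, RAID 0 exposes the sum of all members, while RAID 1 exposes the capacity of a single member, because each member holds a full copy. This hedged helper assumes equal-size members and covers only the two levels discussed here; the function name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: usable capacity of a RAID 0 or RAID 1 array,
# assuming all members have the same size.
# usable_capacity <level> <member-size> <member-count>
usable_capacity() {
  local level="$1" size="$2" count="$3"
  case "$level" in
    0) echo $(( size * count )) ;;  # striping: every member adds capacity
    1) echo "$size" ;;              # mirroring: every member holds a full copy
    *) echo "unsupported RAID level: $level" >&2; return 1 ;;
  esac
}

# Example with two 100 GiB volumes:
#   usable_capacity 0 100 2   # 200
#   usable_capacity 1 100 2   # 100
```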

Software RAID 1 solutions do not always allow a hot swap of a failed drive. That means the failed drive can only be replaced after powering down the computer it is attached to. For servers that are used simultaneously by many people, this may not be suitable. Such systems typically use hardware controllers that do support hot swapping.

For techniques such as continuous data protection or frequent disk snapshots for backup, block-level storage (for example, a SAN with data deduplication) might be more efficient than NAS.

To overcome these limitations, AWS offers Elastic File System (EFS), a managed NAS service.

AWS Elastic File System

When you want to make common file storage accessible by more than one EC2 instance, you can use the AWS Elastic File System (EFS) service.

AWS EFS is a managed NAS offering from AWS. It provides a file system interface with standard file system access semantics for Amazon EC2 instances. EFS file systems are distributed across an unconstrained number of storage servers, which allows them to scale elastically to petabytes and supports massively parallel access from Amazon EC2 instances.

Amazon EFS capacity grows and shrinks automatically, and the service provides high availability with consistently low latencies. Amazon EFS is well suited to big data analytics, media processing workflows, content management, web serving, and home directories. Because it is a network file system, it may have higher latency than locally attached EBS volumes, but it can be shared across several instances, even across Availability Zones within a region. It is also more expensive than EBS and, for many workloads, lower in performance.

It should be noted that while Amazon EFS supports both NFSv4.1 and NFSv4.0 (but not NFSv2 or NFSv3), some NFS features are not supported, including pNFS, mandatory locking, client delegation or callbacks of any type, and access control lists (ACLs), among others.
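Because EFS is accessed over NFSv4.x, mounting it from an EC2 instance uses the standard Linux NFS client. The sketch below builds a mount command along the lines of the one AWS documents for EFS (NFSv4.1, 1 MiB read and write sizes, hard mounts). The file system ID, region, and mount point are hypothetical, and it assumes the nfs-common package is installed and a mount target exists in the instance's Availability Zone. It only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
# Sketch: mount an Amazon EFS file system over NFSv4.1.
# The file system ID, region and mount point are hypothetical placeholders.
# DRY_RUN=1 (the default) only prints the commands.
DRY_RUN="${DRY_RUN:-1}"
EFS_OPTS="nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2"

run() {
  if [ "$DRY_RUN" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# mount_efs <file-system-id> <region> <mount-point>
mount_efs() {
  local fs_id="$1" region="$2" mnt="$3"
  run sudo mkdir -p "$mnt"
  run sudo mount -t nfs4 -o "$EFS_OPTS" \
    "$fs_id.efs.$region.amazonaws.com:/" "$mnt"
}

# Example:
#   mount_efs fs-12345678 us-east-1 /mnt/efs
```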

For a full list of unsupported features, see the Amazon EFS documentation. It is also worth noting that, at the time of writing, Amazon EFS is available in only four AWS regions: North Virginia, Ohio, Oregon, and Ireland.

Takeaway: Leveraging AWS Marketplace 3rd Party Solutions

As discussed, the problem with the tools we’ve shown is that they don’t support every protocol version or region, which means not every user can make use of them. Enter 3rd party solutions, designed to bridge the compatibility gap. Since they are offered by known vendors and can be found in AWS Marketplace, they’re reliable and trustworthy.

For example, if you need an NFSv3 solution that works in all AWS regions, consider NetApp Cloud Volumes ONTAP (formerly ONTAP Cloud). It supports all NFS versions and offers storage efficiencies such as data deduplication, among many others. Additionally, Cloud Volumes ONTAP supports all versions of CIFS and offers iSCSI support as well.

Want to get started? Try out Cloud Volumes ONTAP today with a 30-day free trial.