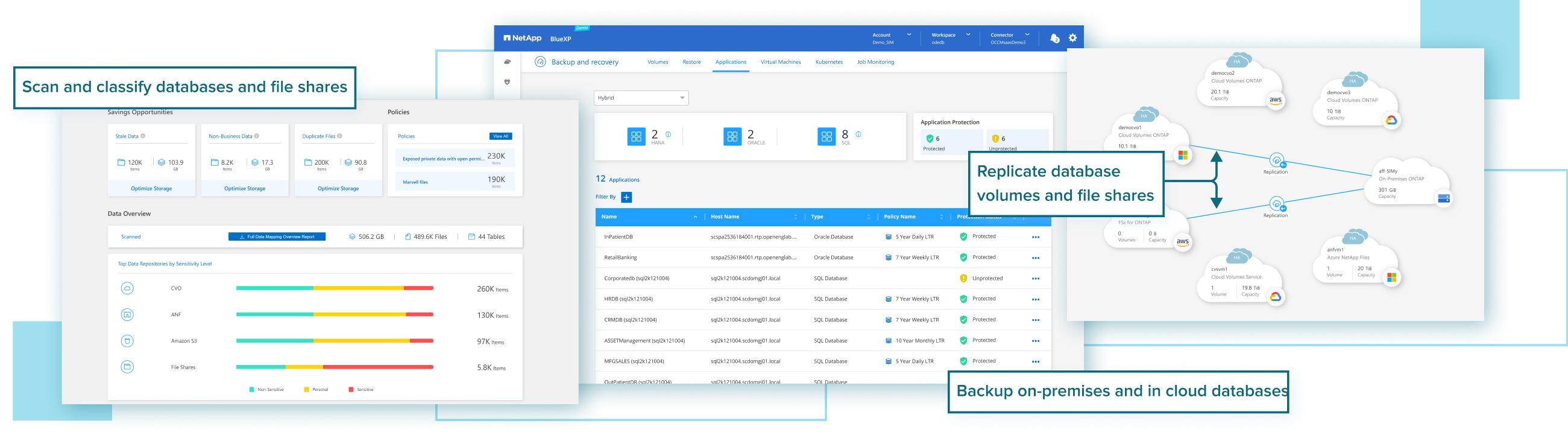

Enterprises want to migrate mission-critical databases to the public cloud, but they don't want to give up the enterprise-grade data management capabilities, reliability, cost efficiency, performance, and security they had on-premises.

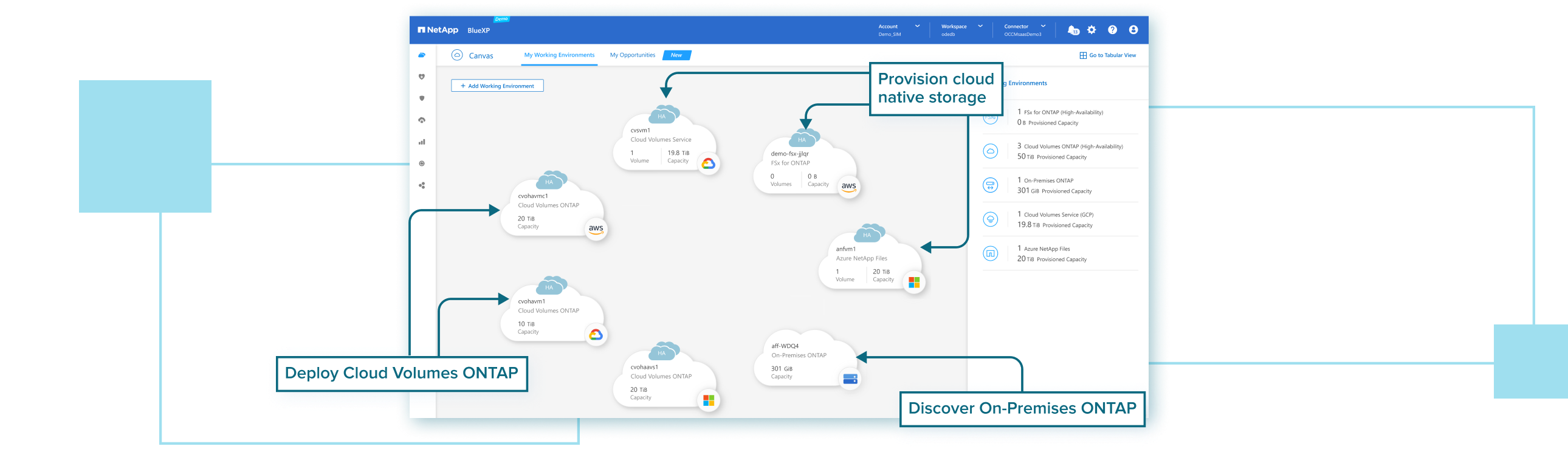

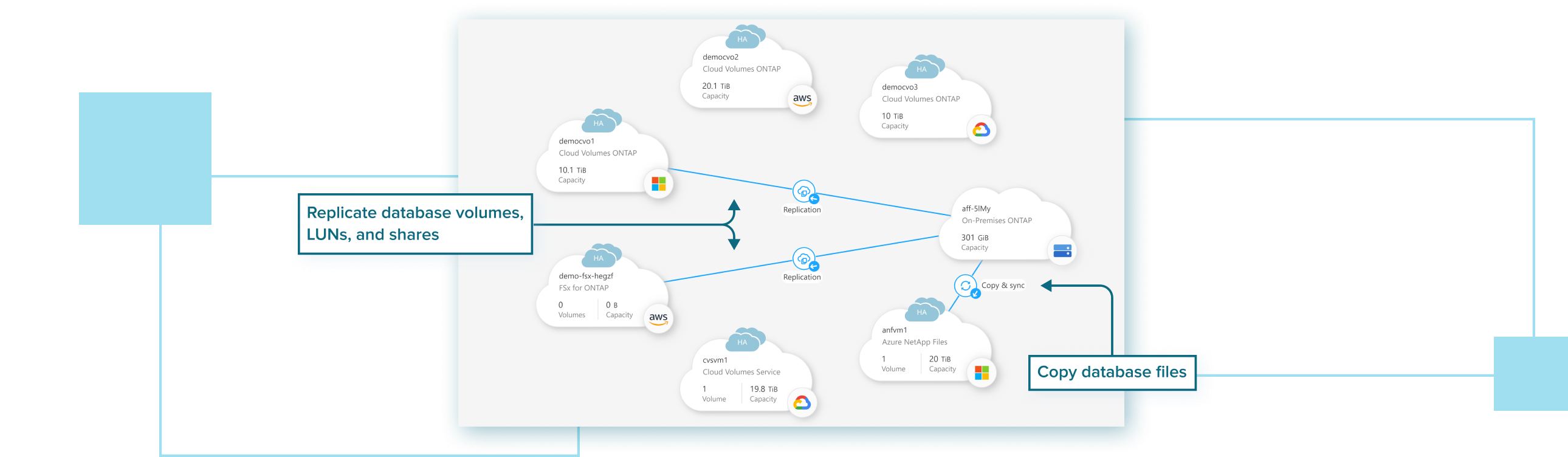

BlueXP lets you centrally provision and manage highly available, high-performance, and cost-efficient cloud storage infrastructure for Oracle, Microsoft SQL Server, and SAP HANA databases without trade-offs.