June 14, 2018

Topics: Cloud Volumes ONTAP, Data Tiering, AWS, Database, Advanced | 7 minute read

When setting up an enterprise-grade AWS database, there are multiple challenges to deal with, including the system’s performance, agility, security and compliance, data retention, and cost, just to name a few.

Given that all of these challenges have to be addressed with a budget in mind, you need to make sure that your architecture is not just highly performant but also cost-efficient. The best way to do that is to understand your storage requirements so you can find the solution that provides the capacity and performance you need at the lowest cost.

Cloud storage on demand helps organizations deal with already-huge and ever-growing database storage requirements. You can now provision Amazon RDS instance sizes of up to 16 TB for your database. That size may be good enough for many databases, but the cost may be too high for some types of databases, such as OLTP and OLAP environments, which have different configurations.

An alternative option is to host your database using Amazon EC2 instances, and then to leverage Cloud Volumes ONTAP data tiering to move infrequently-used data to inexpensive Amazon S3 storage without impacting performance.

In this article we’ll look at how Amazon RDS instance size limits make a good case for using Amazon EC2-hosted databases, and show how storage tiering with Cloud Volumes ONTAP can help you avoid reaching capacity and keep costs low.

AWS Databases: The Difference Between RDS and EC2

Amazon RDS is the primary offering for AWS database services. Even though an AWS RDS database provides you with an easy way to quickly set up and run enterprise-class databases, you will still need to worry about storage handling and the associated AWS RDS costs because of the database’s complex and ever-growing storage requirements.

Amazon RDS instance sizes have a maximum of 16 TB, but prior to November 2017 the Amazon RDS instance size limit was only 4-6 TB. Such limits can easily be crossed if you have not properly estimated your rate of data growth. Though both automated and manual AWS RDS backups are free no matter the size of your database, you will incur costs if your backup retention period is long, or if you have a large number of backups due to compliance requirements.

Provisioning storage for a database needs to be done properly in order to avoid disrupting normal business operations. When a company is growing, its data might increase for a number of different reasons, such as servicing new business functions, preserving sources of data locally to improve performance, meeting compliance and data protection regulations that require longer backup retention periods, and more. When data grows this quickly, the Amazon RDS storage limit is easily reached. Hitting the limit brings the database to a standstill and forces the customer to rethink their database engine.
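To make the growth risk concrete, here is a minimal sketch that estimates how long a database has before it reaches the 16 TB limit mentioned above. It assumes steady compound monthly growth, which real workloads rarely follow exactly. The function name, the starting size, and the 5% growth rate are hypothetical values chosen for illustration.

```python
import math

RDS_LIMIT_GB = 16 * 1024  # the 16 TB Amazon RDS limit cited in this article


def months_until_limit(current_gb, monthly_growth_rate, limit_gb=RDS_LIMIT_GB):
    """Return the number of whole months until compound growth reaches limit_gb.

    Assumes a constant monthly growth rate (an illustrative simplification).
    """
    if current_gb <= 0:
        raise ValueError("current_gb must be positive")
    if current_gb >= limit_gb:
        return 0
    if monthly_growth_rate <= 0:
        raise ValueError("monthly_growth_rate must be positive")
    # Solve current_gb * (1 + r)^n >= limit_gb for n, then round up.
    n = math.log(limit_gb / current_gb) / math.log(1 + monthly_growth_rate)
    return math.ceil(n)


# Hypothetical example: a 6144 GB database growing 5% per month.
print(months_until_limit(6144, 0.05))
```

Running the estimate with your own measured growth rate gives a rough planning horizon, not a guarantee.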

One option to avoid this issue with Amazon RDS sizes is to move to another engine that supports a higher storage limit. But even in that situation, there is no guarantee that the company won’t reach a new storage limit in the future. Such a move might also require changes to the application code and database code, which could take a lot of resources and increase costs due to the migration. There is another option: Amazon EC2.

Running and managing your database on Amazon EC2 may not be as easy as running it on Amazon RDS since your team will need to manage the majority of the configuration and setup required, but by using Amazon EC2 instances you will never have to deal with the storage limit. You can add as many instances as your database can support, and you can also choose different disk types based on performance and IO throughput, again without a limit.

Using different types of storage with Amazon EC2 instances does open up another requirement. You will not want to pay premium storage rates for backups and archives. In the next section we’ll discuss how that can be avoided using Cloud Volumes ONTAP for data tiering to Amazon S3.

Tiered Storage with Amazon S3

To avoid hitting database capacity limits, you need an Amazon RDS alternative to manage the lifecycle of your company’s data. You can keep all your DR, backup, and snapshot data on Amazon S3, while your main workloads on Amazon EC2 use Amazon EBS storage. The challenge is that database synchronization and management between Amazon S3 and Amazon EC2 isn’t automatic, so using Amazon S3 for storing your database may not seem feasible without help.

For this, NetApp’s Cloud Volumes ONTAP for AWS storage offers data tiering to Amazon S3. Amazon S3 storage costs less than Amazon EBS, so storing infrequently used data on Amazon S3 lets you avoid paying performant Amazon EBS disk rates for data you rarely access.

How does Amazon S3 help in storage tiering? When you establish storage for your database, you then need to consider the lifecycle of the data. The hot data that needs a high IO throughput should go on fast storage types such as Amazon EBS. But if your cold and DR-related data is stored on the same performant storage drives, you’ll wind up paying a lot to store data that is not used frequently. By leveraging Amazon S3, with costs as low as $0.023 per GB/month, you have a storage format that can act as the capacity tier. You can then have all your backups and snapshots on Amazon S3 while your active data is on Amazon EBS.

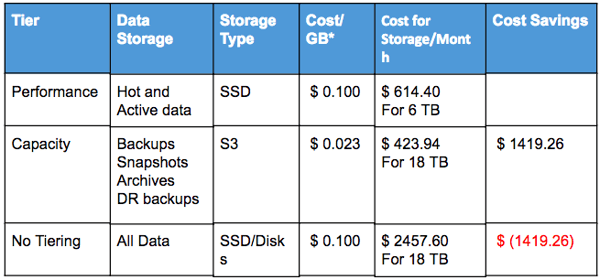

Using Amazon S3 as a capacity tier, your storage can grow at a very low cost as compared to costs on Amazon EBS. To see how having two tiers of storage for your database can make a difference, let’s look at some numbers below. In this example we’ll use a typical, full-grown database of 6144 GB in size:

*Note: Calculation based on US East (Ohio) rates

The table above assumes that the capacity tier will be three times the size of the actual database storage. This is because you may have to hold large amounts of backup data for long periods of time, which may require you to have an even larger capacity tier.
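The comparison can be sketched as simple arithmetic. The snippet below uses the 6144 GB database size, the three-times capacity tier, and the $0.023 per GB/month Amazon S3 rate quoted in this article. The Amazon EBS rate of $0.10 per GB/month is an assumed placeholder, not a figure from this article, and the model ignores request, transfer, IOPS, and licensing charges, so treat the result as a rough illustration rather than a quote.

```python
S3_RATE = 0.023  # USD per GB-month, the Amazon S3 figure quoted in this article
EBS_RATE = 0.10  # USD per GB-month, ASSUMED placeholder; check current pricing


def monthly_storage_cost(db_gb, capacity_multiplier=3,
                         ebs_rate=EBS_RATE, s3_rate=S3_RATE):
    """Compare keeping everything on EBS with tiering cold data to S3.

    capacity_multiplier is the size of the backup/snapshot data relative
    to the active database (three times, per the article's assumption).
    """
    capacity_gb = db_gb * capacity_multiplier
    all_ebs = (db_gb + capacity_gb) * ebs_rate
    tiered = db_gb * ebs_rate + capacity_gb * s3_rate
    return {
        "all_ebs": round(all_ebs, 2),
        "tiered": round(tiered, 2),
        "savings": round(all_ebs - tiered, 2),
    }


# The article's example database of 6144 GB.
print(monthly_storage_cost(6144))
```

Swapping in the current rates for your region and volume type shows how sensitive the savings are to the gap between the two tiers.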

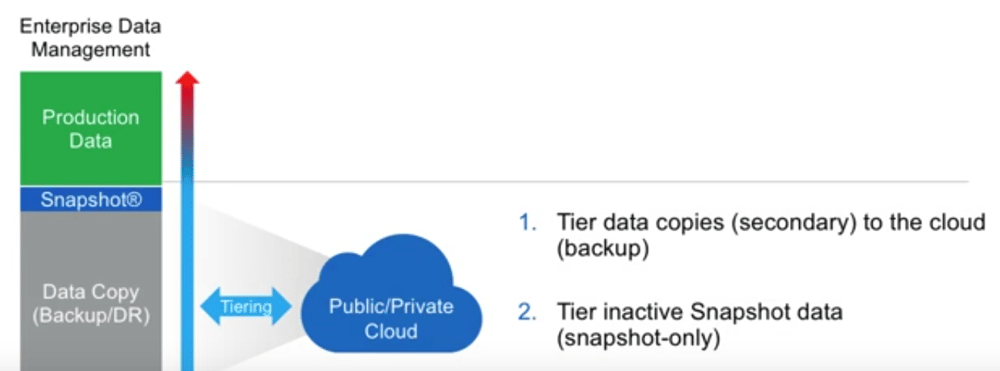

The screenshot below shows a typical setup of storage tiers with Cloud Volumes ONTAP. When you set up your enterprise data storage from the Cloud Manager management console, Cloud Volumes ONTAP will create a single bucket on Amazon S3 for all your capacity needs and a performance tier on Amazon EBS, using either HDDs or SSDs.

Cloud Volumes ONTAP storage tiering is automatic, which means that your snapshot backups and secondary backups will be automatically pushed to the Amazon S3 bucket from the Amazon EBS storage. If the backup data is required later on, it will be automatically pulled back from Amazon S3 to your Amazon EBS performance tier. Cloud Volumes ONTAP handles the movement of snapshots and secondary DR copies: it identifies when snapshots should be moved to the Amazon S3 bucket, and it pulls DR copies back automatically when a restore requires them.

Cloud Volumes ONTAP’s capabilities are not limited to data tiering. They also include features that help you further reduce costs and efficiently manage all your enterprise storage needs. With thin provisioning, you can avoid pre-allocating storage capacity per database instance and instead allocate storage dynamically from a single storage pool. Cloud Volumes ONTAP’s data compression, deduplication, and compaction can further reduce the size of your backups by up to 50%. NetApp’s FlexClone technology leverages efficient snapshot technology to create zero-capacity cloned volumes instantly.
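As a rough illustration of what these features mean for capacity planning, the sketch below compares pre-allocated capacity with actual consumption and applies the best-case 50% backup reduction mentioned above. It is a simplified model, not a representation of how Cloud Volumes ONTAP works internally. The function names, volume names, and sizes are all hypothetical.

```python
def provisioning_summary(volumes):
    """Compare pre-allocated capacity with what the databases actually use.

    volumes maps a volume name to a tuple of (allocated_gb, used_gb).
    """
    allocated = sum(alloc for alloc, _ in volumes.values())
    consumed = sum(used for _, used in volumes.values())
    return {
        "preallocated_gb": allocated,       # what per-instance allocation reserves
        "consumed_gb": consumed,            # what a shared thin pool must hold
        "unused_gb": allocated - consumed,  # capacity reserved but sitting idle
    }


def reduced_backup_gb(backup_gb, reduction=0.5):
    """Backup size after storage efficiencies; 0.5 is the article's best case."""
    if not 0 <= reduction <= 1:
        raise ValueError("reduction must be between 0 and 1")
    return backup_gb * (1 - reduction)


# Hypothetical volumes for two database instances.
volumes = {"orders_db": (4096, 1500), "reporting_db": (2048, 600)}
print(provisioning_summary(volumes))
print(reduced_backup_gb(18432))
```

Actual savings depend on how compressible and how duplicated your data is, so the 50% figure is an upper bound rather than an expectation.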

Summary

A database that comes to a standstill because of under-provisioned storage, or because it has reached the maximum Amazon RDS size limit, can severely impact a business. In the cloud era, when you can scale your enterprise databases to an unlimited size, you should not put a limit on your capacity for growth. Tiering storage to Amazon S3 with Cloud Volumes ONTAP for AWS is a great way to reduce AWS storage costs for databases hosted on Amazon EC2 instances, and it is a smart alternative to the limited storage capacity available on Amazon RDS.