More about AWS EBS

- Amazon EBS Elastic Volumes in Cloud Volumes ONTAP

- AWS EBS Multi-Attach Volumes and Cloud Volumes ONTAP iSCSI

- AWS EBS: A Complete Guide and Five Cool Functions You Should Start Using

- AWS Snapshot Automation for Backing Up EBS Volumes

- How to Clean Up Unused AWS EBS Volumes with A Lambda Function

- Boost your AWS EBS performance with Cloud Volumes ONTAP

- Are You Getting Everything You Can from AWS EBS Volumes?: Optimizing Your Storage Usage

- EBS Pricing and Performance: A Comparison with Amazon EFS and Amazon S3

- Cloning Amazon EBS Volumes: A Solution to the AWS EBS Cloning Problem

- The Largest Block Storage Volumes the Public Cloud Has to Offer: AWS EBS, Azure Disks, and More

- Storage Tiering between AWS EBS and Amazon S3 with NetApp Cloud Volumes ONTAP

- Lowering Disaster Recovery Costs by Tiering AWS EBS Data to Amazon S3

- 3 Tips for Optimizing AWS EBS Performance

- AWS Instance Store Volumes & Backing Up Ephemeral Storage to AWS EBS

- AWS EBS and S3: Object Storage Vs. Block Storage in the AWS Cloud

September 20, 2020

Topics: Cloud Volumes ONTAP, Cloud Storage, Data Protection, AWS, Featured, Advanced | 22 minute read

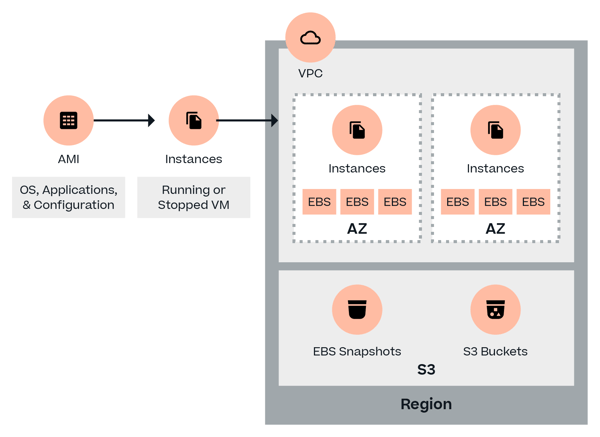

To successfully meet the challenge of storing data in the cloud using AWS, IT professionals need to familiarize themselves with the management of Amazon Elastic Block Store (Amazon EBS) volumes and snapshots. Amazon EBS volumes provide persistent storage for compute instances in Amazon’s hugely popular Infrastructure as a Service (IaaS) offering, Elastic Compute Cloud (EC2).

In this post, you’ll learn:

- What is AWS EBS

- How to perform common EBS operations

- Five highly useful EBS functions you should start using

- How Cloud Volumes ONTAP can let you take faster snapshots

What Is EBS?

Amazon Elastic Block Store (AWS EBS) is a raw block-level storage service designed to be used with Amazon EC2 instances. When mounted to Amazon EC2 instances, Amazon EBS volumes can be used like any other raw block device: they can be formatted with a specific file system, host operating systems and applications, and have snapshots or clones made from them.

Every Amazon EBS volume that is provisioned is automatically replicated to other storage devices in the same Availability Zone inside the AWS region to offer redundancy and high availability (Amazon designs the service for 99.999% availability). AWS also offers seamless encryption of data at rest (both boot and data volumes) using Amazon-managed keys or keys customers create through AWS Key Management Service (AWS KMS).

Two Types of AWS EBS Volumes

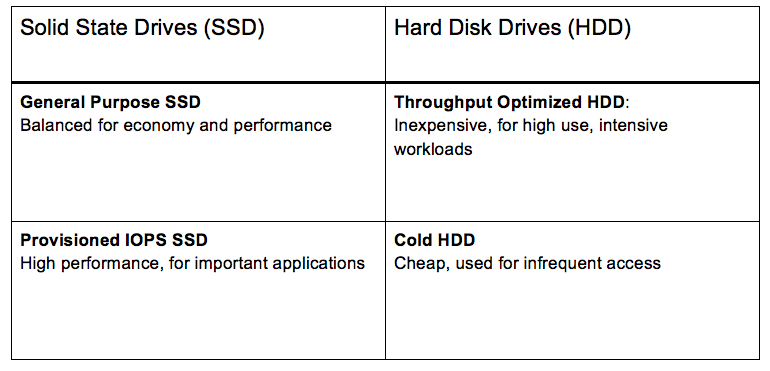

There are two Amazon EBS volume type categories: SSD-backed volumes and HDD-backed volumes (see official Amazon documentation).

SSD-backed volumes are optimized for transactional workloads, where the volume performs a lot of small read/write operations. The performance of such volumes is measured in IOPS (input/output operations per second).

HDD-backed volumes are designed for large sequential workloads where throughput matters much more than IOPS, so their performance is measured in MiB/s. Each category has two subsets.

Sub-types of SSD-based EBS Volumes

General Purpose SSD (gp2)

Balanced for price and performance and recommended for most use cases, general purpose SSD is a good choice for boot volumes, applications in development and testing environments, and even for low latency production apps that don’t require high IOPS. Baseline performance is directly correlated with volume size, since customers receive 3 IOPS per GiB of volume size.

Volumes also have I/O “credits,” which represent the available bandwidth that the volume can use to burst above its baseline, up to a maximum of 3,000 IOPS, for a certain period of time. Credits accumulate over time (the larger the volume, the faster it earns them) and are saved for whenever the Amazon EBS volume needs them. When the credits are depleted, the volume goes back to its baseline performance rate. Sizes of gp2 volumes can vary from 1 GiB to 16 TiB, while maximum throughput is capped at 160 MiB/s.
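As a rough illustration of the sizing rule above, the following Python sketch estimates the baseline IOPS of a gp2 volume. The 3 IOPS per GiB rate and the 3,000 IOPS burst ceiling come from this article; the 100 IOPS floor and 16,000 IOPS cap are assumptions based on AWS documentation and may change, and the function names are hypothetical.

```python
GP2_IOPS_PER_GIB = 3     # from the article: 3 IOPS per GiB
GP2_BURST_IOPS = 3_000   # from the article: burst ceiling
GP2_MIN_IOPS = 100       # assumption: floor documented by AWS, not stated in the article
GP2_MAX_IOPS = 16_000    # assumption: cap documented by AWS, not stated in the article
GP2_MAX_SIZE_GIB = 16 * 1024


def gp2_baseline_iops(size_gib: int) -> int:
    """Estimate the baseline IOPS for a gp2 volume of the given size."""
    if not 1 <= size_gib <= GP2_MAX_SIZE_GIB:
        raise ValueError("gp2 volumes range from 1 GiB to 16 TiB")
    return max(GP2_MIN_IOPS, min(size_gib * GP2_IOPS_PER_GIB, GP2_MAX_IOPS))


def gp2_can_burst(size_gib: int) -> bool:
    """Bursting only helps while the baseline is below the burst ceiling."""
    return gp2_baseline_iops(size_gib) < GP2_BURST_IOPS


for size in (20, 100, 500, 1000, 2000):
    print(f"{size:>5} GiB: baseline {gp2_baseline_iops(size):>5} IOPS, "
          f"burst useful: {gp2_can_burst(size)}")
```

Under these assumptions, a volume of about 1,000 GiB already has a baseline equal to the burst ceiling, so credits no longer matter beyond that size.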

Provisioned IOPS SSD (io1)

This is the SSD volume type to use for critical production applications and databases that need high-performance Amazon EBS storage (up to 32,000 IOPS). Instead of credits, io1 volumes let you provision a desired IOPS value, with a maximum ratio of 50 IOPS per GiB. That means a performance rate of up to 5,000 IOPS can be set when provisioning a 100 GiB volume. At that ratio, volumes of 640 GiB and larger can provision the maximum of 32,000 IOPS. io1 volume sizes vary from 4 GiB to 16 TiB, while throughput is capped at 500 MiB/s.

AWS designs Provisioned IOPS SSD volumes to deliver within 10% of the provisioned IOPS performance 99.9% of the time over a given year. The recommended practice is to maintain at least a 2:1 ratio between IOPS and volume size.
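The io1 limits can be worked through with a few lines of Python. This is only a sketch using the figures quoted in this article (a 50:1 IOPS-to-GiB ratio, a 32,000 IOPS ceiling, and sizes from 4 GiB to 16 TiB); the function names are hypothetical and the limits may differ for newer volume or instance types.

```python
IO1_MAX_IOPS_PER_GIB = 50   # from the article: maximum ratio of 50:1
IO1_MAX_IOPS = 32_000       # from the article: per-volume ceiling
IO1_MIN_SIZE_GIB = 4
IO1_MAX_SIZE_GIB = 16 * 1024


def io1_max_provisionable_iops(size_gib: int) -> int:
    """Highest IOPS value that can be provisioned for a volume of this size."""
    if not IO1_MIN_SIZE_GIB <= size_gib <= IO1_MAX_SIZE_GIB:
        raise ValueError("io1 volumes range from 4 GiB to 16 TiB")
    return min(size_gib * IO1_MAX_IOPS_PER_GIB, IO1_MAX_IOPS)


def io1_min_size_for(iops: int) -> int:
    """Smallest volume size (GiB) that allows the requested IOPS."""
    if not 0 < iops <= IO1_MAX_IOPS:
        raise ValueError("requested IOPS is outside the io1 range")
    needed = -(-iops // IO1_MAX_IOPS_PER_GIB)  # ceiling division
    return max(IO1_MIN_SIZE_GIB, needed)


print(io1_max_provisionable_iops(100))  # 100 GiB * 50 IOPS/GiB
print(io1_min_size_for(32_000))         # size at which the ceiling is reached
```

With these numbers, a 100 GiB volume can be provisioned with up to 5,000 IOPS, and the 32,000 IOPS ceiling is reached at 640 GiB.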

Sub-types of HDD-based EBS Volumes

Throughput Optimized HDD (st1)

Throughput Optimized HDD is designed for applications that require larger storage and bigger throughput, such as big data or data warehousing, where IOPS is not that relevant. st1 volumes, much like SSD gp2, use a burst model, where the initial baseline throughput is tied to the volume size, and credits are accumulated over time.

Bigger volumes have higher baseline throughput and will acquire credits faster over time. Maximum throughput is capped at 500 MiB/s, sizes vary from 500 GiB to 16 TiB, and volumes can hold 1 TiB in credits per TiB (which is more than 30 minutes of workload under highest throughput).
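The "more than 30 minutes" figure can be checked with simple arithmetic. The sketch below assumes a full credit bucket of 1 TiB drained at the 500 MiB/s maximum and ignores any credits earned while bursting, so it is a lower bound; the function name is hypothetical.

```python
MIB_PER_TIB = 1024 * 1024


def full_burst_seconds(credit_tib: float, throughput_mib_s: float) -> float:
    """Time to drain a credit bucket at a constant throughput (no refill)."""
    return credit_tib * MIB_PER_TIB / throughput_mib_s


seconds = full_burst_seconds(credit_tib=1, throughput_mib_s=500)
print(f"{seconds:.0f} seconds, or about {seconds / 60:.1f} minutes")
```

That works out to roughly 35 minutes, which matches the claim in the text.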

Cold HDD (sc1)

Cold HDD (sc1) is a magnetic storage format suitable for scenarios where storing data at low cost is the main criterion. Sizes vary from 500 GiB to 16 TiB, throughput can reach 250 MiB/s, and a burst model similar to that of st1 volumes is used, but the credits fill at a slower rate (12 MiB/s per TiB, as opposed to 40 MiB/s per TiB with st1 volumes). Neither st1 nor sc1 volumes can be used as boot volumes, so it is recommended to use gp2 volumes for that purpose.

4 Common EBS Operations

We’ll cover the following common operations:

- Creating a new EBS volume

- Attaching an EBS volume to an EC2 instance

- Creating a snapshot from an EBS volume

- Copying EBS volumes between regions

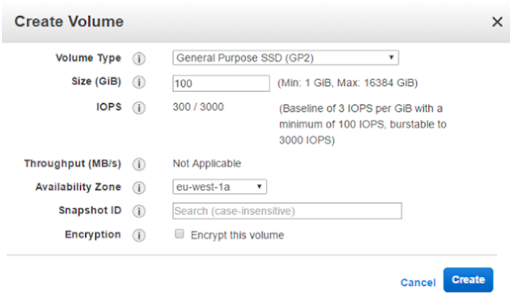

1. Creating a New EBS Volume

To create a new volume:

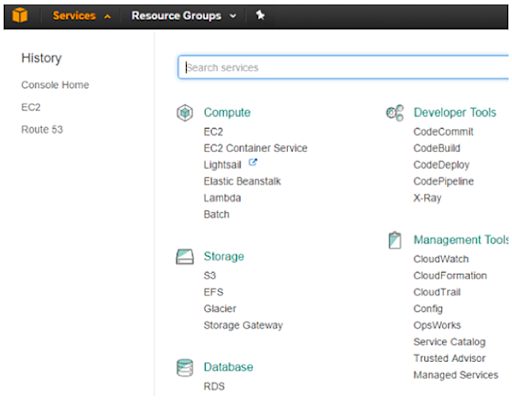

1. Log in to an AWS account and select the desired region in the top right corner of the browser. Click on “Services” at the top left corner of the screen and select “EC2.”

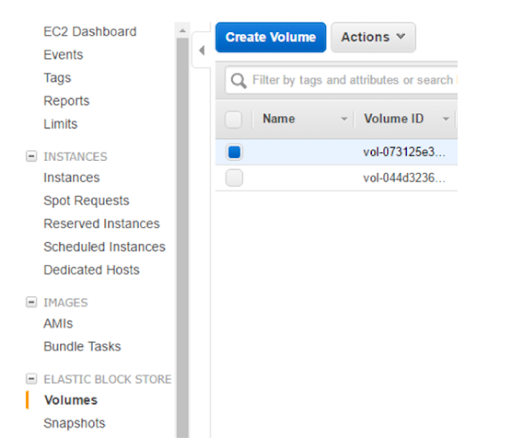

2. In the left navigation pane, under “EC2 Dashboard,” locate the “Elastic Block Store” subsection and select “Volumes.” A dialog displays. Click “Create Volume”.

3. In the pop-up window, customize the volume (type, size, IOPS or throughput), select the desired Availability Zone, and decide whether or not to use encryption. Click “Create”.

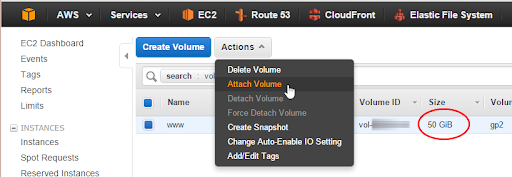

2. Attach an EBS Volume to an EC2 Instance

To attach an EBS volume to an Amazon EC2 instance, right-click the volume and select “Attach”. Please note that a new, empty volume will need to be formatted inside the operating system being used before it can store data.

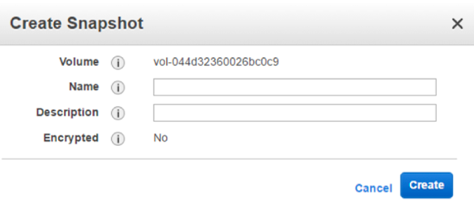

3. Create a Snapshot of an EBS Volume

To move an Amazon EBS volume to another region, create a snapshot. Right-click on the volume and select “Create Snapshot”.

Enter a name for the snapshot. Note that the snapshot will be encrypted if the original volume was encrypted, and unencrypted if the original volume was not.

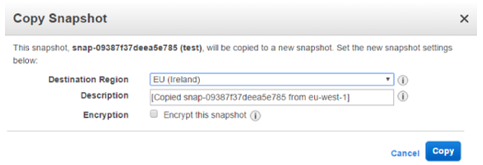

4. Copying EBS Volumes Between Regions

The Amazon EBS Elastic Volumes feature allows AWS customers to easily switch between Amazon EBS volume types on the fly and dynamically grow volume sizes.

To copy an EBS volume between regions using the AWS Management Console:

1. Create an EBS Volume (as shown above)

2. Attach the volume to an EC2 Instance (as shown above)

3. Create a snapshot (as shown above)

4. Once the snapshot is created, move it to the other region. In the Elastic Block Store subsection in the “EC2 Dashboard” menu, select “Snapshots.” Find the desired snapshot, and select “Copy.”

5. Select “Destination Region,” as well as the option to encrypt the new snapshot. In order to utilize the new snapshot in the destination region, change the region in the top right corner of AWS Management Console.

To copy an EBS volume to another region using the AWS CLI:

2. Create the volume using the CLI command aws ec2 create-volume. Important arguments are --size, --region, --availability-zone, --volume-type, --iops (when creating Provisioned IOPS SSD volumes), and --encrypted (if the data must be encrypted at rest).

3. Attach it with the command aws ec2 attach-volume (supply the --volume-id, --instance-id, and --device arguments).

4. Create a snapshot with the command aws ec2 create-snapshot with the argument --volume-id.

5. Move the snapshot with the command aws ec2 copy-snapshot. Provide the parameters --source-region and --source-snapshot-id, plus --encrypted if the copy should be encrypted. For cross-region copying, run the command against the destination region (for example, with --region), since the copy is created in the region that receives the request.
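To make the CLI steps concrete, here is a small Python sketch that only builds the argument lists for the four commands; it does not call AWS. The helper names, volume IDs, instance ID, device name, and regions are all hypothetical placeholders. The lists could be passed to subprocess.run on a machine where the AWS CLI is installed and configured.

```python
import shlex


def create_volume_cmd(size_gib, availability_zone, volume_type="gp2",
                      region=None, iops=None, encrypted=False):
    """Build the 'aws ec2 create-volume' command line."""
    cmd = ["aws", "ec2", "create-volume",
           "--size", str(size_gib),
           "--availability-zone", availability_zone,
           "--volume-type", volume_type]
    if region:
        cmd += ["--region", region]
    if iops is not None:  # only meaningful for Provisioned IOPS volumes
        cmd += ["--iops", str(iops)]
    if encrypted:
        cmd.append("--encrypted")
    return cmd


def attach_volume_cmd(volume_id, instance_id, device):
    """Build the 'aws ec2 attach-volume' command line."""
    return ["aws", "ec2", "attach-volume",
            "--volume-id", volume_id,
            "--instance-id", instance_id,
            "--device", device]


def create_snapshot_cmd(volume_id, description=None):
    """Build the 'aws ec2 create-snapshot' command line."""
    cmd = ["aws", "ec2", "create-snapshot", "--volume-id", volume_id]
    if description:
        cmd += ["--description", description]
    return cmd


def copy_snapshot_cmd(source_region, source_snapshot_id,
                      destination_region, encrypted=False):
    """Build 'aws ec2 copy-snapshot', sent to the destination region."""
    cmd = ["aws", "ec2", "copy-snapshot",
           "--region", destination_region,
           "--source-region", source_region,
           "--source-snapshot-id", source_snapshot_id]
    if encrypted:
        cmd.append("--encrypted")
    return cmd


# Hypothetical identifiers, for illustration only.
steps = [
    create_volume_cmd(100, "us-west-2a", region="us-west-2", encrypted=True),
    attach_volume_cmd("vol-0123456789abcdef0", "i-0123456789abcdef0", "/dev/sdf"),
    create_snapshot_cmd("vol-0123456789abcdef0", "pre-migration snapshot"),
    copy_snapshot_cmd("us-west-2", "snap-0123456789abcdef0", "us-east-1"),
]
for step in steps:
    print(shlex.join(step))
```

Building the commands as lists rather than strings avoids shell quoting problems if they are later executed.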

5 Less-Known Functions of AWS EBS Volumes You Should Start Using

Now that we’ve covered the basics of EBS and how to perform operations, here are a few more advanced EBS functions that you may not know about, but should definitely consider using.

1. RAID

Amazon EBS volume data is replicated across multiple servers within the same availability zone to prevent the loss of data from the failure of any single component. This replication makes Amazon EBS volumes more reliable, which means customers who follow the guidance found on the Amazon EBS and Amazon EC2 product detail pages typically achieve good performance out of the box. However, there are certain scenarios where it is necessary to achieve a higher network throughput with much better IOPS.

One way to accomplish this is by configuring a software-level RAID array. RAID is supported by almost all operating systems and can be used to boost the IOPS and network throughput of EBS volumes in AWS.

Before configuring RAID, it is important to know how large your RAID array should be and how many IOPS are required. In the most basic terms, a RAID 0 array works by using two or more volumes as one, combining the IOPS and network throughput of all member volumes.
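The idea of combining volumes can be sketched as simple addition. The Python example below assumes a striped array of identical volumes and ignores any per-instance bandwidth limits, which in practice can cap the result; the Volume type, the function name, and the sample figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Volume:
    size_gib: int
    iops: int
    throughput_mib_s: int


def striped_array(volumes: Sequence[Volume]) -> Volume:
    """Approximate a striped (RAID 0) array as the sum of its members."""
    if len(volumes) < 2:
        raise ValueError("a striped array needs at least two volumes")
    if len(set(volumes)) != 1:
        raise ValueError("this sketch only models identical member volumes")
    return Volume(
        size_gib=sum(v.size_gib for v in volumes),
        iops=sum(v.iops for v in volumes),
        throughput_mib_s=sum(v.throughput_mib_s for v in volumes),
    )


member = Volume(size_gib=500, iops=1500, throughput_mib_s=160)
print(striped_array([member, member]))
```

Two 500 GiB volumes with 1,500 IOPS each would, under these assumptions, behave like one 1,000 GiB volume with 3,000 IOPS.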

Things to Remember When Configuring RAID on Amazon EBS:

- Typically, RAID types such as 0, 1, 5, and 6 can be configured on AWS.

- According to AWS, the ideal RAID configurations are RAID 0 and RAID 1. With RAID 0, users can stripe across multiple volumes to gain a better distribution of I/O and therefore better performance.

- In the same way, RAID 1 is used to achieve better availability and fault-tolerant behavior as two volumes are mirrored, with one acting as a backup copy for the other in case of a failure.

- Different volume types, such as General Purpose, Provisioned IOPS, Throughput Optimized, and Cold HDD volumes, can be combined in a RAID 0 configuration. This uses the accumulated available bandwidth of all the volumes to achieve higher throughput and IOPS.

- A diagrammatic representation of the architecture consisting of RAID 0 arrays should look like this:

Why only RAID 0? Why not other RAIDs on Amazon EBS?

AWS also supports RAID 1 on Amazon EBS volumes, which creates a greater fault tolerance capability, and makes it ideal for use cases where data durability is part of the requirement. However, it does not provide write performance improvement because the data is written to multiple volumes simultaneously.

Amazon EBS does not recommend RAID 5 and RAID 6 because the parity write operations of these RAID modes consume some of the IOPS available to your volumes. Depending on the configuration of RAID array, RAID 5 and 6 modes provide 20-30% fewer usable IOPS than a RAID 0 configuration at an increased cost. Using identical volume sizes and speeds, a two-volume RAID 0 array can outperform a four-volume RAID 6 array that costs twice as much.

It is also recommended to avoid booting from a RAID volume, as GRUB is typically installed on only one device in the array, and if one of the mirrored devices fails, you may not be able to boot the OS.

Click here for a step-by-step guide for configuring RAID on EBS volumes.

2. Tuning Amazon EBS Performance

There are a number of factors that can affect Amazon EBS performance. However, by adhering to a few simple guidelines, you can ensure your cloud storage deployments are as optimal as possible.

- Right-size your volumes: As Amazon EBS performance for all volume types is directly tied to the size of the storage allocated, it is important to plan ahead for the level of performance that you will require. For example, for gp2, the size of the volume that you create will determine the baseline IOPS that you will receive, which defines the normal performance of the volume after all burst credits have been consumed.

- Choose an appropriate block size: Data block size determines the amount of data processed in each I/O operation and is set when a filesystem is formatted. By increasing block size, it is possible to get more throughput, which can be useful for applications performing large amounts of sequential I/O, such as database systems. However, block size also determines the minimum size of a storage allocation, and so can mean using more space when working with small objects or files.

- Optimize network bandwidth: As Amazon EBS is accessed over the network like a SAN is, it is important to have a high-performance link to the Amazon EBS volumes you are accessing from Amazon EC2. This can be achieved by either using 10 Gb networking or by using Amazon EBS-optimized Amazon EC2 instances, which provide you with a dedicated connection between your Amazon EC2 instances and the Amazon EBS storage volumes you have allocated.
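The block size trade-off described in the list above can be illustrated with two small calculations: throughput is roughly IOPS multiplied by the size of each I/O, and a file always occupies a whole number of blocks. This Python sketch treats the I/O size and the file system block size as the same value for simplicity, which is an assumption; the function names and the sample numbers are hypothetical, apart from the 160 MiB/s gp2 cap quoted earlier in this article.

```python
def throughput_mib_s(iops: int, io_size_kib: int, cap_mib_s: float = 160.0) -> float:
    """Approximate throughput as IOPS * I/O size, limited by the volume cap."""
    return min(iops * io_size_kib / 1024, cap_mib_s)


def allocated_bytes(file_size_bytes: int, block_size_bytes: int) -> int:
    """Space consumed by a file when storage is allocated in whole blocks."""
    blocks = -(-file_size_bytes // block_size_bytes)  # ceiling division
    return max(blocks, 1) * block_size_bytes


# Larger I/Os move more data with the same IOPS budget...
for io_size in (4, 16, 64):
    print(f"{io_size:>3} KiB I/O at 3000 IOPS: {throughput_mib_s(3000, io_size)} MiB/s")

# ...but small files waste more space as the block size grows.
for block in (4096, 65536):
    print(f"1 KiB file on {block}-byte blocks uses {allocated_bytes(1024, block)} bytes")
```

The second loop shows the cost side: a 1 KiB file consumes a full block, whatever the block size is.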

3. Increasing Amazon EBS Volume Size While in Use

Amazon EBS is elastic, which means you can modify the size, IOPS, and the types of volume on the go without difficulty.

Previously, modifying the size of a volume required stopping the instance, taking a snapshot of the volume, creating a new, larger volume from that snapshot, and attaching it.

Recently, Amazon announced that you can now modify Amazon EBS volume size and type while they are in an in-use state, significantly decreasing the effort required to extend or modify attributes of a volume.

The modification itself comes at no additional charge, although you pay for any additional storage you provision. Increasing Amazon EBS volume size also helps in production use cases where there is an application with a database on Amazon EBS.

In the past, DB size would increase, but the administrator would not be able to modify the size as that requires some down time which is not possible in a 24 x 7 live system. Now with this feature, it’s easier to modify the size of a volume. It is important to note that this feature is not available for previous generation instance types.

In general, the following steps are involved in modifying a volume:

- Issue the modification command.

- Monitor the progress of the modification.

- If the size of the volume was modified, extend the volume's file system to take advantage of the increased storage capacity.
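The "monitor the progress" step in the list above usually amounts to a polling loop. The sketch below is one possible shape for it in Python: get_state is a hypothetical callable that you would implement yourself, for example by wrapping aws ec2 describe-volumes-modifications, and the state names (modifying, optimizing, completed, failed) are assumed to match those reported by AWS. The demo at the end uses canned states instead of a real volume.

```python
import time
from typing import Callable

# Assumption: the file system can be extended once the modification
# reaches "optimizing" or "completed".
READY_STATES = {"optimizing", "completed"}


def wait_for_modification(get_state: Callable[[], str],
                          poll_seconds: float = 5.0,
                          timeout_seconds: float = 600.0,
                          sleep: Callable[[float], None] = time.sleep) -> str:
    """Poll until the volume modification is usable, failed, or timed out."""
    waited = 0.0
    while True:
        state = get_state()
        if state in READY_STATES:
            return state
        if state == "failed":
            raise RuntimeError("volume modification failed")
        if waited >= timeout_seconds:
            raise TimeoutError(f"still '{state}' after {waited:.0f} seconds")
        sleep(poll_seconds)
        waited += poll_seconds


# Demo with canned states; no AWS calls are made and no real sleeping occurs.
fake_states = iter(["modifying", "modifying", "optimizing"])
result = wait_for_modification(lambda: next(fake_states), sleep=lambda _: None)
print(result)
```

Passing sleep as a parameter keeps the loop easy to test without waiting in real time.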

For more visit: Modifying the Size, IOPS, or Type of an Amazon EBS Volume.

4. Amazon CloudWatch Events for Amazon EBS

AWS automatically provides data such as instance metrics and volume status checks via Amazon CloudWatch. These are state notifications and can be used to monitor Amazon EBS volumes.

Typically, monitoring data is available on Amazon CloudWatch in five-minute periods at no additional charge. Amazon CloudWatch also provides one-minute monitoring for Provisioned IOPS (PIOPS) volume types such as io1. This data is accessible via the Amazon CloudWatch API or the Amazon EC2 console, which is part of the AWS Management Console.

Amazon EBS has several state notification events for volumes:

- Ok: Normal volume performance

- Warning: Degraded volume performance

- Impaired: Stalled volume performance, severely impacted

- Insufficient data: No information is available on the volume

A user can always configure Amazon CloudWatch alarms to trigger an SNS notification based on a state change. In addition to this, Amazon CloudWatch Events allow a user to configure Amazon EBS to emit notifications whenever a snapshot or encryption state has a status change. Users can also use Amazon CloudWatch Events to establish rules that trigger programmatic actions in response to changes in the snapshot or encryption key states.

In a use case scenario, Customer XYZ wants to ensure whenever a snapshot is created, the snapshot is shared with another account or copied to another region for disaster-recovery purposes. A possible solution is for Customer XYZ to leverage Amazon CloudWatch to establish rules that trigger programmatic actions in response to a change in snapshot or encryption.

With Amazon CloudWatch Events, a createSnapshot event is sent to Customer XYZ’s AWS account when an action to create a snapshot completes, and a copySnapshot event is sent when an action to copy a snapshot completes. When the createSnapshot event reports success, it can trigger an AWS Lambda function that automatically copies the snapshot from one specified region to another.

Refer to the following documentation for a step-by-step guide to implementing triggers with Amazon CloudWatch Events: Create Triggers on CloudWatch Events
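A Lambda function for the Customer XYZ scenario might look roughly like the Python sketch below. It assumes the event carries a top-level region field, a detail-type of "EBS Snapshot Notification", and a detail block with event, result, and snapshot_id fields; verify that shape against the current AWS documentation. The function names and the sample event are hypothetical, and destination_ec2 is expected to be an EC2 client (for example, from boto3) created for the destination region.

```python
from typing import Optional, Tuple


def parse_snapshot_event(event: dict) -> Optional[Tuple[str, str]]:
    """Return (source_region, snapshot_id) for a successful createSnapshot event."""
    if event.get("detail-type") != "EBS Snapshot Notification":
        return None
    detail = event.get("detail", {})
    if detail.get("event") != "createSnapshot" or detail.get("result") != "succeeded":
        return None
    snapshot_ref = detail.get("snapshot_id", "")
    source_region = event.get("region")
    if not snapshot_ref or not source_region:
        return None
    # Assumed form: an ARN-like string ending in ".../snap-xxxxxxxx".
    return source_region, snapshot_ref.rsplit("/", 1)[-1]


def copy_on_create(event: dict, destination_ec2) -> Optional[str]:
    """Copy a newly created snapshot into the destination client's region."""
    parsed = parse_snapshot_event(event)
    if parsed is None:
        return None  # ignore copySnapshot events, failures, and anything else
    source_region, snapshot_id = parsed
    response = destination_ec2.copy_snapshot(
        SourceRegion=source_region,
        SourceSnapshotId=snapshot_id,
        Description=f"DR copy of {snapshot_id} from {source_region}",
    )
    return response["SnapshotId"]


# Hypothetical sample event, for illustration only.
sample_event = {
    "detail-type": "EBS Snapshot Notification",
    "source": "aws.ec2",
    "region": "us-west-2",
    "detail": {
        "event": "createSnapshot",
        "result": "succeeded",
        "snapshot_id": "arn:aws:ec2::us-west-2:snapshot/snap-01234567",
    },
}
print(parse_snapshot_event(sample_event))
```

Reacting only to createSnapshot events matters here: a function that also reacted to successful copySnapshot events would keep copying its own copies.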

5. Sharing Snapshots Across AWS Accounts

With Amazon EBS, users can share snapshots publicly and privately. Sharing snapshots publicly means that all the data in the snapshot is shared with all AWS users, hence it is advisable that proper checks be performed before changing permissions of the snapshot.

AWS allows you to share unencrypted volume snapshots privately and publicly. However, encrypted snapshots cannot be shared with all AWS users, only with an approved list of AWS users using custom CMK (customer managed key).

Encrypted snapshots help achieve maximum security between sharer and receiver. Snapshots are encrypted with a CMK. To share the snapshot with others, you must also share the same custom CMK that was used to encrypt the volume.

There are several things to consider before sharing snapshots. Below are some limitations of snapshot sharing:

- You can share both encrypted and unencrypted volume snapshots between different AWS accounts.

- Encrypted snapshots can only be shared if a custom key has been created and shared with another account. For more, see: How to Copy an Encrypted Volume to Another Account.
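The sharing rules above can be encoded in a small helper. This Python sketch builds the aws ec2 modify-snapshot-attribute command line and refuses to make an encrypted snapshot public; it does not call AWS, the function name and IDs are hypothetical, and sharing the custom key with the other account is a separate step that is not shown.

```python
from typing import List, Sequence


def share_snapshot_cmd(snapshot_id: str,
                       account_ids: Sequence[str] = (),
                       public: bool = False,
                       encrypted: bool = False) -> List[str]:
    """Build the command that grants create-volume permission on a snapshot."""
    if public and encrypted:
        raise ValueError("encrypted snapshots cannot be shared publicly")
    if public == bool(account_ids):
        raise ValueError("choose either public sharing or a list of account IDs")
    cmd = ["aws", "ec2", "modify-snapshot-attribute",
           "--snapshot-id", snapshot_id,
           "--attribute", "createVolumePermission",
           "--operation-type", "add"]
    if public:
        cmd += ["--group-names", "all"]
    else:
        cmd += ["--user-ids", *account_ids]
    return cmd


# Hypothetical snapshot and account IDs, for illustration only.
print(" ".join(share_snapshot_cmd("snap-0123456789abcdef0",
                                  account_ids=["123456789012"],
                                  encrypted=True)))
```

Given how much data a public snapshot exposes, it seems safer for a helper like this to default to private sharing and require public=True explicitly.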

A step-by-step guide for snapshot creation can be found here:

How to Create an AWS EBS Snapshot

Faster AWS EBS Snapshots with Cloud Volumes ONTAP

NetApp’s Cloud Volumes ONTAP enables companies to leverage enterprise storage capabilities in cloud environments. By deploying Cloud Volumes ONTAP instances in desired regions and utilizing NetApp’s SnapMirror technology, customers can take snapshots of their data without requiring additional storage or impacting an application's performance, and then replicate those snapshots to another region while keeping them synchronized automatically per a user-defined schedule.

If needed, Cloud Volumes ONTAP can also leverage physical on-premises ONTAP systems as a source or destination, allowing users to backup or restore data to and from the AWS cloud. Additionally, SnapMirror only needs to create a single baseline copy once: subsequent copies after the baseline only replicate the changed data.

Another benefit of using Cloud Volumes ONTAP in AWS comes from NetApp’s cost-saving storage efficiencies, such as thin provisioning, data deduplication, and compression. These features allow Cloud Volumes ONTAP to consume much less underlying Amazon EBS capacity than otherwise possible on AWS.

These storage efficiencies also persist for the SnapMirror relationships, meaning that the data is replicated in a space efficient manner, which saves you on data egress charges. Another of these features, data tiering, allows AWS users to store infrequently-used data, DR environments, and snapshot data on Amazon S3 until that data is needed, when it is automatically brought back up to performant, low-latency Amazon EBS volumes.

Learn More About Amazon EBS

Amazon EBS is one of the most important storage formats available in the public cloud today, so it is important to make sure you know all the ins and outs of this versatile AWS offering. In this article, we’ve looked at a number of ways that you can manage, configure, and augment your Amazon EBS volumes, including how to get better performance, higher levels of protection, and optimized costs with Cloud Volumes ONTAP for AWS.

There’s a lot more to learn about AWS EBS. To continue your research, take a look at the rest of our blogs on this topic:

EBS Pricing and Performance: A Comparison with Amazon EFS and Amazon S3

Amazon Elastic Block Store (Amazon EBS), Amazon Simple Storage Service (Amazon S3), and Amazon Elastic File System (Amazon EFS) are all different storage systems provided by Amazon. Although all three can be used with Amazon EC2 instances, they vary widely when it comes to parameters such as cost, performance, throughput, compute resources, access control, scalability, and durability.

It’s essential to get to know these differences in order to select the optimal storage option for your infrastructure and business requirements. Whichever option you choose, Cloud Volumes ONTAP provides an array of features designed to boost the performance of your selected storage, ensuring high availability and lowering costs.

For the full comparison, read EBS Pricing and Performance: A Comparison with Amazon EFS and Amazon S3 here.

Storage Tiering between AWS EBS and Amazon S3 with NetApp Cloud Volumes ONTAP

In AWS, Cloud Volumes ONTAP uses Amazon EBS for its storage layer, providing added data management capabilities for this highly performant storage type. But what about the data that is required by a company but not necessary to keep on this pricey storage service?

Cloud Volumes ONTAP’s automated storage tiering feature makes it possible for AWS users to send infrequently accessed data, such as snapshots, DR copies, and inactive data to a capacity tier on Amazon S3. Amazon S3 costs are much lower, which means as long as the data isn’t required for active use, users save. When the cold data is accessed, Cloud Volumes ONTAP automatically returns it to Amazon EBS.

Read more in “Storage Tiering between AWS EBS and Amazon S3 with NetApp Cloud Volumes ONTAP” here.

AWS Instance Store Volumes & Backing Up Ephemeral Storage to AWS EBS

On AWS, instance stores are a type of short-term block-exposed storage that exists on host-machine disks. This temporary storage type can be composed of one or more instance store volumes. However, don’t expect anything stored in this format to last: once the instance terminates, so does all the data that was stored on it.

The cloud is mostly built around persistent storage, so using instance stores requires a dedicated backup mechanism in place. How can users work around a challenge like this? Do instance store volumes compare to Amazon EBS volumes performance-wise? Find out here.

Read “AWS Instance Store Volumes & Backing Up Ephemeral Storage to AWS EBS” here.

AWS EBS and S3: Object Storage Vs. Block Storage in the AWS Cloud

AWS storage offerings started with object storage offered by Amazon Simple Storage Service (Amazon S3), and soon followed up with the block-level Amazon Elastic Block Store (Amazon EBS). These are two of the most popular services on AWS, but does each offer the same benefits for every use case? In this article, we put the AWS object and block storage offerings head to head and compare them in terms of access, scale, speed, versioning, and (last but not least) price.

Which will come out on top, object or block? In either case, Cloud Volumes ONTAP gives you added benefits for using either storage service on AWS.

Read “AWS EBS and S3: Object Storage Vs. Block Storage in the AWS Cloud” here.

AWS EBS Volume Backup with EBS Snapshots

Your Amazon EBS volume backup is an essential part of deploying on AWS. Data loss equals financial loss, and with the most demanding deployments, backups need to be created on a near-constant basis to make sure that data is never lost in the case of a disruption. Automation is a useful component in building a backup solution that leverages AWS EBS snapshots.

This article shows you how you could use the AWS CLI to schedule automated EBS snapshots. You’ll see how to snapshot Amazon EC2 instances, use and create permissions tags for snapshots, tag AWS EBS instances, and the scripting that can be used to carry these out using the AWS CLI.

Read “AWS EBS Volume Backup with EBS Snapshots” here.

Cloning Amazon EBS Volumes: A Solution to the AWS EBS Cloning Problem

Companies measure success today by how fast projects get off the ground and out to the customers. An important part of that is finding a way to increase the number of tests that can be run during the development process on a per-hour basis. More tests mean better code, and better code means a better product. For developers using Amazon EBS though, this can be an issue—AWS doesn’t offer a native service for cloning Amazon EBS volumes.

This article will look at some of the AWS-specific workarounds for this missing feature, and also offer a solution from NetApp: instant and space-efficient data clones with FlexClone technology and Cloud Volumes ONTAP.

Read more in "Cloning Amazon EBS Volumes: A Solution to the AWS EBS Cloning Problem".

Lowering Disaster Recovery Costs by Tiering AWS EBS Data to Amazon S3

It finally happened: a major disaster has taken your operations completely offline. If you’ve taken the right steps, your disaster recovery plan should be kicking in, and you’ll be on the way to failing over to your secondary copy in the cloud. Pretty soon, the problem will be solved and you can return to normal operation. But how much did all of that cost you?

Every company should have a disaster recovery plan in place, but they should never ignore the costs involved. Since disaster recovery copies can be as massive as the primary environment and all the data it includes, they’re expensive to store. Cloud Volumes ONTAP can help lower disaster recovery costs through a combination of features.

Find out how in “Lowering Disaster Recovery Costs by Tiering AWS EBS Data to Amazon S3.”

The Largest Block Storage Volumes the Public Cloud Has to Offer: AWS EBS, Azure Disks, and More

When it comes to block storage, the offerings in the public cloud just keep getting bigger. Looking beyond the different Amazon EBS volume types offered by AWS, this article also examines the different highly performant block storage disk types on Azure.

Which will be the best for your use case from a performance and a price standpoint? In this article we compare the service offerings by use case, volume size, max IOPS, max throughput, and price to see which comes out on top.

Read “The Largest Block Storage Volumes the Public Cloud Has to Offer: AWS EBS, Azure Disks, and More” here.

3 Tips for Optimizing AWS EBS Performance

It’s not easy to optimize Amazon EBS performance. Between all the factors that can be used to measure performance, be it I/O, throughput, or latency, what optimization often comes down to is how much money can be saved using a volume without impacting performance.

What benefits can your Amazon EBS volume leverage from sizing volumes correctly, configuring the best RAID configurations, and using Fio benchmarking? Find out what Amazon EBS optimized performance looks like with the help of these three tips.

Read “3 Tips for Optimizing AWS EBS Performance” here.

Are You Getting Everything You Can from AWS EBS?: Optimizing Your Amazon EBS Volumes

In the quest to get the highest rate of performance out of Amazon EBS volumes, there are a few AWS-native solutions that can be used, but what role can Cloud Volumes ONTAP play in making your Amazon EBS volumes perform with greater efficiency?

In this post we’ll look at some of the benefits that Cloud Volumes ONTAP has for Amazon EBS volume performance: striping volumes, reducing disk consumption, and freeing volumes from high latency demands of snapshot creation, and more.

Read “Are You Getting Everything You Can from AWS EBS?: Optimizing Your Amazon EBS Volumes” here.

See Additional Guides on Key IaaS Topics

Together with our content partners, we have authored in-depth guides on several other topics that can also be useful as you explore the world of IaaS.

AWS EFS

Authored by NetApp

- AWS EFS: Is It the Right Storage Solution for You?

- AWS File Sharing with AWS EFS

- EFS Backup Methods with AWS, Open-Source, and Cloud Volumes ONTAP

AWS FSx

Authored by NetApp

- AWS FSx: 6 Reasons to Use It in Your Next Project

- FSx for Windows: An In-Depth Look

- AWS FSx Pricing Explained with Real-Life Examples

Cloud Cost

Authored by Spot